What Is Happy Horse AI Video Generator? The 2026 AI Video Breakthrough Explained

Key Takeaways

- Happy Horse 1.0 is a 15B-parameter open-source unified Transformer model that jointly generates high-quality video and synchronized audio from text or image prompts.

- It currently leads the Artificial Analysis AI Video Arena with an Elo score of 1333, outperforming Seedance 2.0 in motion quality, prompt adherence, and character consistency.

- Key strengths include native audio generation, multilingual lip-sync, 1080p output, and exceptional physics/motion realism that reduces common AI video artifacts like floaty movements or broken transitions.

- Available via multiple web platforms with free starter credits; also fully open-source for self-hosting, fine-tuning, and commercial use.

- Ideal for creators, marketers, and developers seeking fast, professional text-to-video and image-to-video results without separate audio tools.

What Is Happy Horse AI Video Generator?

Happy Horse AI Video Generator, powered by the Happy Horse 1.0 model, represents a significant advancement in generative AI for video content. Released in early 2026, this multimodal system transforms text descriptions or static images into dynamic, cinematic videos with synchronized sound, in seconds.

Unlike traditional AI video tools that generate visuals first and add audio separately, Happy Horse employs a unified architecture. This integrated approach ensures better temporal alignment between visuals and sound, resulting in more coherent and professional outputs.

The model supports both text-to-video and image-to-video workflows, making it versatile for quick concept visualization or animating existing assets. Community feedback and early benchmarks highlight its ability to handle complex scenes with natural motion, accurate physics, and high prompt fidelity.

Technical Architecture Behind Happy Horse 1.0

At its core, Happy Horse 1.0 features a 15-billion-parameter unified Transformer with approximately 40 layers of self-attention. This design enables joint modeling of video frames and audio waveforms within a single forward pass.
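As a rough sanity check on these figures, the sketch below estimates the hidden size that a 15B-parameter, 40-layer model would imply. It assumes a textbook Transformer block (about 4·d² attention parameters plus 8·d² feed-forward parameters) and ignores embeddings and any audio or video specific modules. The real Happy Horse layer layout is not described here, so the function name `estimate_hidden_size` and the result are illustrative only.

```python
import math


def estimate_hidden_size(total_params: float, num_layers: int) -> float:
    """Solve 12 * d^2 * num_layers = total_params for the hidden size d.

    Assumption (not from the model's documentation): each block holds
    roughly 4*d^2 attention weights and 8*d^2 feed-forward weights,
    and everything outside the blocks is small enough to ignore.
    """
    return math.sqrt(total_params / (12 * num_layers))


# 15B parameters and ~40 layers are the figures quoted in the article.
hidden = estimate_hidden_size(15e9, 40)
print(f"implied hidden size: about {hidden:,.0f}")
```

Under these assumptions the implied width lands between 5,000 and 6,000, which is in the usual range for models of this size. A different block design would shift the estimate.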

Key technical highlights:

- Multimodal Integration: Video and audio are generated together, allowing the model to condition audio on visual dynamics (e.g., lip movements matching spoken words or sound effects syncing with actions).

- Multilingual Lip-Sync: Native support for multiple languages with accurate phonetic synchronization, reducing the need for post-production dubbing.

- Resolution and Quality: Outputs up to 1080p with options for super-resolution modules in the open-source release.

- Inference Optimizations: Includes a distilled model variant for faster generation on consumer hardware, alongside full base model support for maximum quality.

This architecture addresses longstanding challenges in AI video generation, such as inconsistent character appearance across frames and unrealistic motion trajectories. Analysis of generated clips shows superior handling of long-sequence coherence, such as gradual environmental changes over simulated time.

How Happy Horse AI Video Generator Works

On hosted platforms, using the tool takes three steps:

- Input Preparation: Enter a detailed text prompt describing the scene, action, style, and mood. For image-to-video, upload a reference image and optionally add a text prompt for motion guidance.

- Generation: The model processes the input through its unified Transformer, producing video frames and audio track simultaneously.

- Output: Users receive a downloadable MP4 file, typically in 5–10 seconds for standard clips, with 1080p resolution and embedded audio.

Advanced users can leverage reference images for character or style consistency, negative prompts to avoid unwanted elements, and parameter tweaks for duration, aspect ratio, or motion intensity.
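The options mentioned above can be pictured as a single request object. The sketch below is a hypothetical structure, not any platform's actual API: every field name (`negative_prompt`, `reference_image`, `duration_seconds`, `aspect_ratio`, `motion_intensity`), the defaults, and the 0.0 to 1.0 intensity range are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


# Hypothetical request shape; check your platform's API reference
# for the real parameter names and allowed values.
@dataclass
class GenerationRequest:
    prompt: str
    negative_prompt: str = ""
    reference_image: Optional[str] = None  # path or URL for image-to-video
    duration_seconds: int = 5
    aspect_ratio: str = "16:9"
    motion_intensity: float = 0.5  # assumed scale: 0.0 (static) to 1.0 (dynamic)

    def validate(self) -> None:
        # Image-to-video may omit the text prompt; text-to-video may not.
        if not self.prompt.strip() and self.reference_image is None:
            raise ValueError("provide a text prompt, a reference image, or both")
        if self.duration_seconds <= 0:
            raise ValueError("duration_seconds must be positive")
        if not 0.0 <= self.motion_intensity <= 1.0:
            raise ValueError("motion_intensity must be between 0.0 and 1.0")

    def to_json(self) -> str:
        self.validate()
        return json.dumps(asdict(self), indent=2)


request = GenerationRequest(
    prompt="A lone kayaker paddling across a misty mountain lake at dawn",
    negative_prompt="watermark, text overlays, distorted hands",
)
print(request.to_json())
```

Validating before sending catches simple mistakes locally, which matters when each generation consumes credits.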

Example Prompt Structure for Best Results:

A serene mountain lake at dawn, mist rising from the water, a lone kayaker paddling smoothly across the frame. Cinematic lighting, realistic water physics, gentle bird sounds and paddle splashes. 1080p, smooth camera pan.
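The example prompt follows a simple pattern: one sentence for the scene and action, one for look and sound, and one for technical cues. A minimal sketch of a helper that assembles prompts in that order is shown below. The function `build_prompt` and its argument names are illustrative, not part of any Happy Horse tooling.

```python
def build_prompt(scene: str, style: list[str], audio: list[str],
                 technical: list[str]) -> str:
    """Assemble a prompt as three sentences: scene, style + audio, technical."""

    def sentence(items: list[str]) -> str:
        # Join non-empty cues with commas, capitalize, and end with a period.
        text = ", ".join(item.strip() for item in items if item.strip())
        return text[0].upper() + text[1:] + "." if text else ""

    sentences = [sentence([scene]), sentence(style + audio), sentence(technical)]
    return " ".join(s for s in sentences if s)


prompt = build_prompt(
    scene=("A serene mountain lake at dawn, mist rising from the water, "
           "a lone kayaker paddling smoothly across the frame"),
    style=["cinematic lighting", "realistic water physics"],
    audio=["gentle bird sounds and paddle splashes"],
    technical=["1080p", "smooth camera pan"],
)
print(prompt)
```

Keeping the cues in separate lists makes it easy to swap the audio or camera direction between takes while holding the scene constant.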

Key Features and Capabilities

- Native Audio Generation: Automatic soundtracks, effects, and dialogue audio that sync precisely with visuals.

- High Motion Quality: Benchmarks indicate reduced artifacts; movements follow realistic physics rather than “floaty” or erratic patterns common in earlier models.

- Prompt Obedience: Strong adherence to complex instructions, including multi-shot storytelling and specific stylistic references (e.g., “in the style of a Hollywood blockbuster”).

- Character and Object Consistency: Improved temporal consistency, minimizing morphing or identity shifts between frames.

- Open-Source Flexibility: Full model weights, inference code, and fine-tuning scripts available, enabling custom deployments or domain-specific adaptations.

- Commercial Rights: Explicitly supports commercial use, appealing to businesses and content studios.

These features position Happy Horse as particularly strong for short-form social content, marketing videos, educational explainers, and prototype filmmaking.

Benchmarks and Performance Comparison

According to Artificial Analysis data, Happy Horse 1.0 achieved an Elo rating of 1333 on the AI Video Arena, surpassing Seedance 2.0. It excels in:

- Motion Realism and Physics

- Visual Fidelity and Detail Preservation

- Audio-Visual Synchronization

- Prompt Following Accuracy

Community tests reveal advantages in handling challenging scenarios, such as intricate human movements, environmental interactions, or extended temporal sequences. For instance, prompts involving gradual transformations (e.g., flowers blooming and wilting) produce more coherent results than many closed-source competitors.

While exact numbers vary by prompt complexity, generation speeds are competitive, and clips often complete faster than on queue-heavy alternatives. Because the model is open source, it can also be optimized for specific hardware, potentially lowering costs for high-volume users.
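To put the Elo figure in context, the sketch below applies the standard Elo expected-score formula, which converts a rating gap into the probability that one model's clip is preferred in a head-to-head vote. It assumes the arena follows the standard formula with a 400-point scale. The rival rating of 1300 is a made-up placeholder, since this article does not state Seedance 2.0's score.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score of A against B (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


happy_horse = 1333          # Elo rating quoted in the article
hypothetical_rival = 1300   # placeholder value, NOT a published score

share = expected_score(happy_horse, hypothetical_rival)
print(f"expected preference share: {share:.1%}")
```

With a gap this small the expected share is only slightly above one half, around 55% in this example. Small Elo leads describe a modest edge across many votes, not a win on every prompt.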

Who Should Use Happy Horse AI?

- Content Creators & Social Media Managers: Rapid production of engaging short videos for YouTube, TikTok, or Instagram Reels.

- Marketers and Businesses: Cost-effective ad creatives, product demos, and campaign visuals with professional polish.

- Educators and Trainers: Animated explainers with synchronized narration, including multilingual versions.

- Developers and Researchers: Self-hosted deployments for custom applications or further model research.

Beginners benefit from intuitive web interfaces with free starter credits, while advanced users appreciate the open-source codebase for deeper customization.

Getting Started with Happy Horse AI Video Generator

Several platforms host the model with user-friendly interfaces. A typical first session looks like this:

- Sign up for free credits (typically 10+ on initial registration).

- Experiment with simple prompts to understand model strengths.

- Upgrade to paid plans for higher credit allowances and priority generation.

Advanced Tips:

- Use highly descriptive prompts including camera angles, lighting, and audio cues for optimal results.

- Combine reference images with text for consistent characters across multiple clips.

- For self-hosting: Follow official inference guides; leverage distilled models on GPUs with at least 24GB VRAM for reasonable speeds.
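The 24GB suggestion is easier to judge with some arithmetic on the weights alone. The sketch below computes the memory needed to hold 15 billion parameters at common numeric precisions. Whether Happy Horse ships 8-bit or 4-bit weights, and how large the distilled variant is, are not stated here, so treat the lower-precision rows as hypothetical. Activations, audio and video buffers, and framework overhead come on top of these figures.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


NUM_PARAMS = 15e9  # full base model size quoted in the article
VRAM_GB = 24       # GPU size suggested above for distilled models

for precision, nbytes in BYTES_PER_PARAM.items():
    gb = weight_memory_gb(NUM_PARAMS, nbytes)
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{precision:>9}: {gb:5.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

At 16-bit precision the full model's weights alone come to 30 GB, which is why the distilled variant or a cloud instance is the practical starting point on a single 24GB card.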

Common Pitfalls and Edge Cases

- Overly Complex Prompts: Extremely long or contradictory instructions may reduce quality; break them into focused scenes instead.

- Hardware Demands for Self-Hosting: Full 15B model requires significant compute; start with distilled versions or cloud instances.

- Creative Control Limits: While prompt adherence is strong, fine details like exact lip-sync in rare dialects may still need minor post-editing.

- Content Moderation: As with most generative tools, hosted platforms apply their own content policies to prompts and outputs; avoid prompts that violate their terms.

Tests of edge cases such as fast-action sports and abstract artistic styles suggest that Happy Horse handles realistic scenarios particularly well, while results for highly stylized or surreal content are less consistent.

Conclusion

Happy Horse 1.0 stands out as a leading AI video generator in 2026, combining technical innovation with practical usability. Its unified video-audio generation, top benchmark performance, and open-source availability make it a powerful choice for anyone seeking high-quality, efficient video creation.

Whether producing quick social clips or exploring advanced custom workflows, Happy Horse delivers cinematic results with minimal friction. Explore the official platforms today to generate your first video and experience the difference in motion quality and synchronization.

Start creating professional AI videos now: sign up for free credits and transform your ideas into reality.

Continue Reading

More articles connected to the same themes, protocols, and tools.

What Is OC Maker? The AI Tool Revolutionizing Original Character Creation in 2026

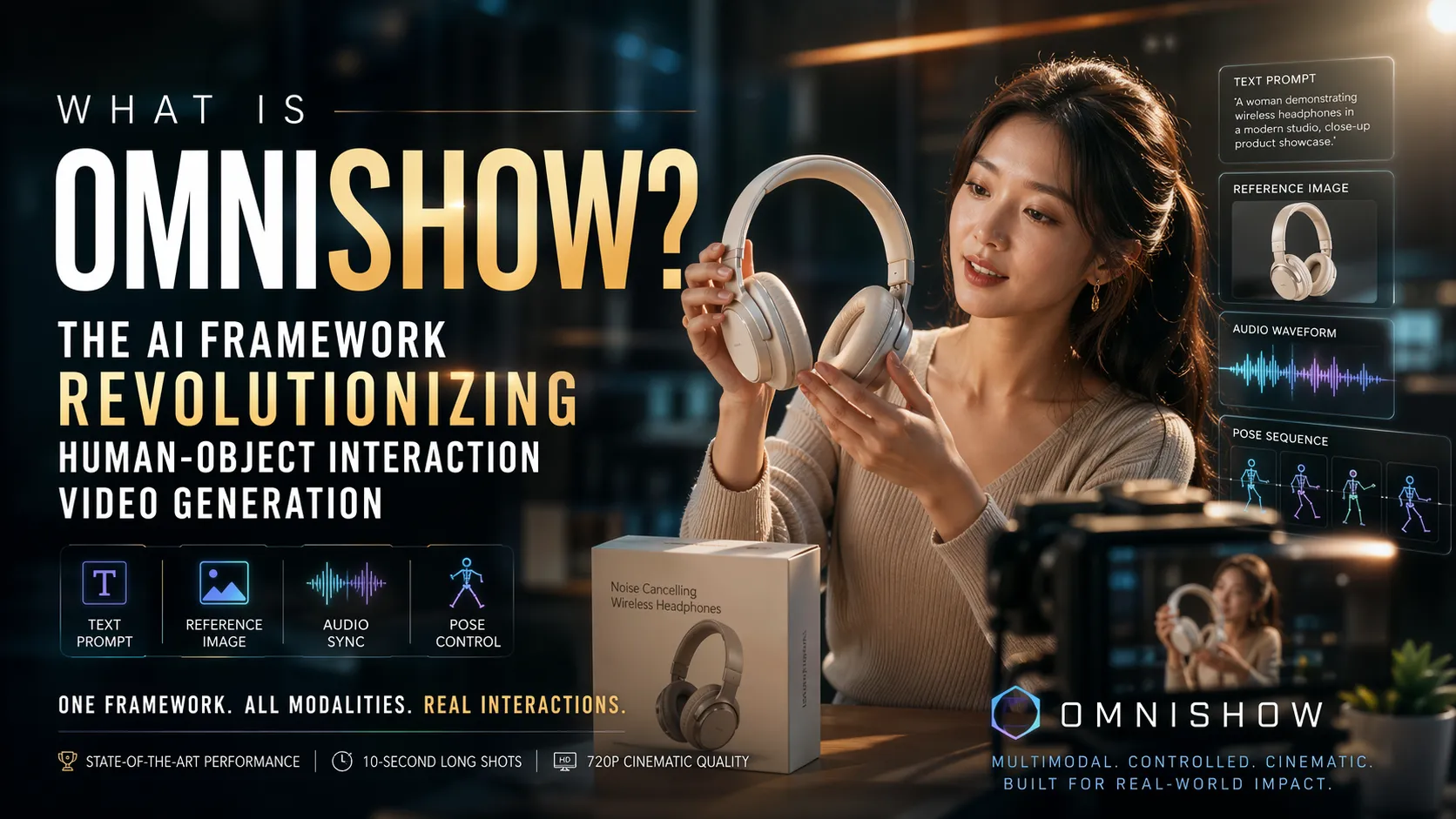

What Is OmniShow? The AI Framework Revolutionizing Human-Object Interaction Video Generation

Ostris AI Toolkit Guide: The Practical LoRA Training Suite for FLUX, Qwen, Z-Image, Wan, and Modern Diffusion Models

Referenced Tools

Browse entries that are adjacent to the topics covered in this article.