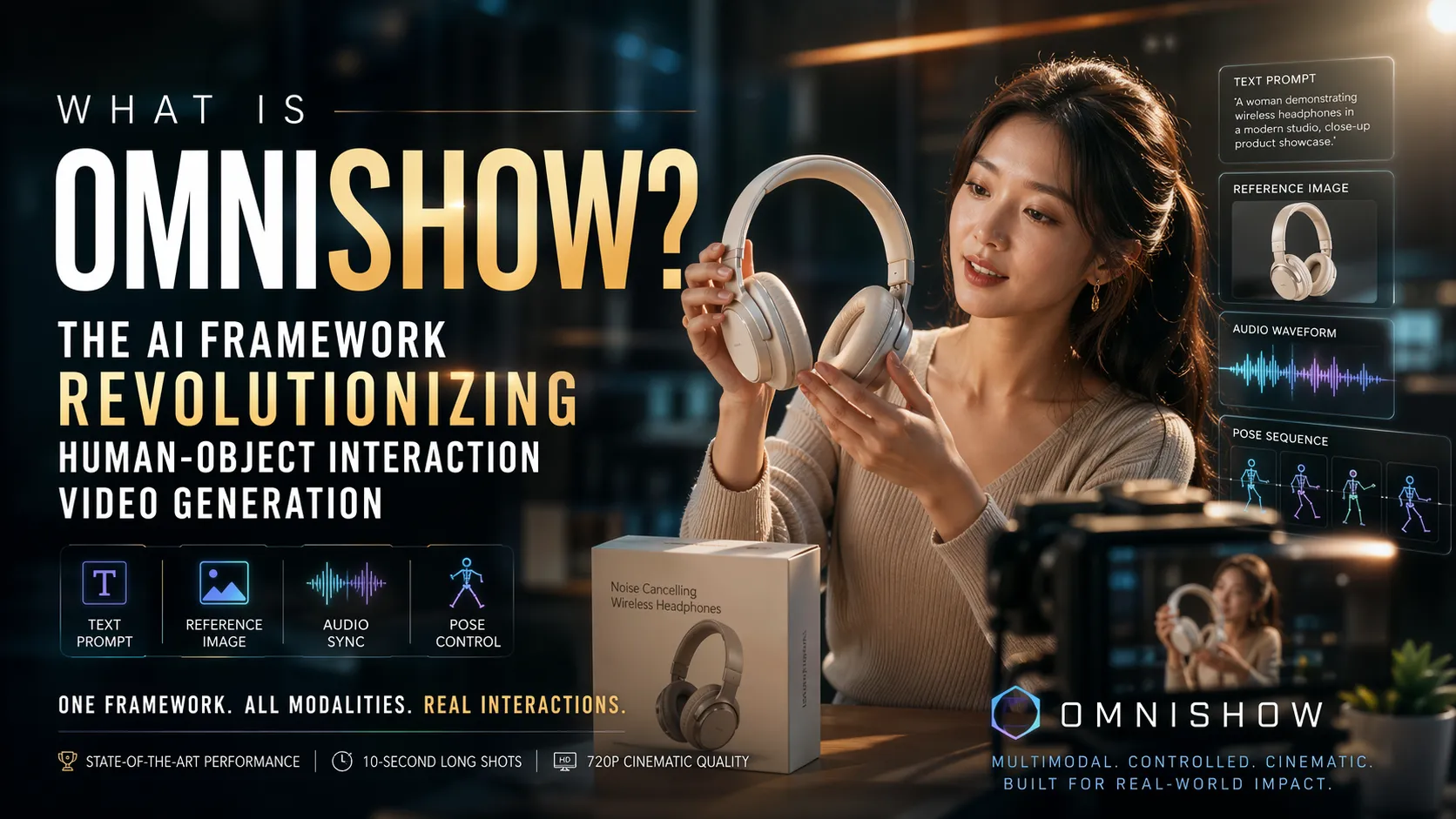

What Is OmniShow? The AI Framework Revolutionizing Human-Object Interaction Video Generation

Key Takeaways

- OmniShow is an end-to-end multimodal AI framework for Human-Object Interaction Video Generation (HOIVG), unifying text prompts, reference images, audio, and pose sequences into high-fidelity videos with realistic human-product interactions.

- Built on a 12.3B-parameter Multimodal Diffusion Transformer, it introduces Unified Channel-wise Conditioning and Gated Local-Context Attention to resolve the trade-off between controllability and visual quality and to ensure precise audio-visual synchronization.

- Benchmarks on the newly introduced HOIVG-Bench show OmniShow achieving state-of-the-art results on the R2V, RA2V, and RP2V tasks and on RAP2V, which OmniShow uniquely supports. It outperforms models like HunyuanCustom, HuMo-17B, VACE, and Phantom-14B in appearance fidelity, motion coherence, and audio-visual sync.

- OmniShow is especially well suited to e-commerce, where it can produce studio-quality product demonstration videos in minutes without physical shoots. It supports long shots of up to 10 seconds at 720p.

- A Decoupled-Then-Joint training strategy addresses the scarcity of fully multimodal training data. It delivers industry-grade physical plausibility, identity preservation, and natural grasping and contact dynamics.

What Is OmniShow?

OmniShow is a cutting-edge AI framework designed specifically for Human-Object Interaction Video Generation (HOIVG). It synthesizes realistic videos of humans interacting with objects—such as demonstrating, grasping, or using products—while conditioning on multiple inputs simultaneously: text for semantics, reference images for visual fidelity, audio for synchronization, and pose for motion control.

Released in April 2026 by researchers affiliated with ByteDance, OmniShow addresses a critical gap in existing video generation tools. Traditional models often handle only one or two modalities and struggle with stable, physically plausible interactions over time. OmniShow unifies all four in a single end-to-end system, producing cinematic results suitable for e-commerce, short-form content, and interactive entertainment.

The framework prioritizes real-world utility. Its outputs maintain consistent character and object appearance, natural motion dynamics, and robust contact physics, even in complex scenarios.

Core Features of OmniShow

OmniShow delivers multimodal control through four primary generation modes:

- Reference-to-Video (R2V): Generates high-fidelity HOI videos from text and reference images, excelling in product appearance preservation.

- Reference + Audio-to-Video (RA2V): Adds audio synchronization for lip movements, gestures, and expressive talking/singing avatars.

- Reference + Pose-to-Video (RP2V): Incorporates pose sequences for precise motion trajectories while ensuring authentic object interactions.

- Full Multimodal (RAP2V): Combines all inputs for the most controllable outputs—industry-first joint conditioning.

Additional capabilities include:

- Long-shot support up to 10 seconds at 24fps and 720p resolution.

- Physical realism: Stable grasping, minimal penetrations, and coherent shadows/lighting.

- Identity preservation: Consistent human and object appearance across frames.

- Cloud-optimized workflows for e-commerce platforms like Shopify, Amazon, and TikTok Shop.

These features make OmniShow particularly valuable for scalable content creation where precision matters.
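It helps to see what a 10-second, 24 fps, 720p long shot means for the diffusion transformer. The arithmetic below counts frames and latent tokens for such a clip. The VAE compression factors (4× temporal, 8× spatial) and the 2×2 patch size are assumptions typical of latent video models, not figures published for OmniShow. `video_token_count` is a hypothetical helper.

```python
import math

def video_token_count(seconds, fps, height, width, t_down=4, s_down=8, patch=2):
    """Estimate how many latent tokens a clip turns into (hypothetical helper).

    t_down / s_down: assumed VAE temporal / spatial compression factors.
    patch: assumed spatial patch size used to tokenize the latents.
    """
    frames = seconds * fps                          # raw video frames
    latent_frames = math.ceil(frames / t_down)      # after temporal compression
    latent_h, latent_w = height // s_down, width // s_down
    tokens_per_frame = (latent_h // patch) * (latent_w // patch)
    return frames, latent_frames, tokens_per_frame, latent_frames * tokens_per_frame

print(video_token_count(10, 24, 720, 1280))
# → (240, 60, 3600, 216000)
```

Under these assumptions, doubling clip length from 5 to 10 seconds doubles the token count the attention layers must handle. This is one reason long shots are harder than short clips. Real VAEs often treat the first frame specially, so exact counts will differ.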

How OmniShow Works: Technical Architecture

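OmniShow builds on Waver 1.0, a 12B-parameter Multimodal Diffusion Transformer (MMDiT) that performs latent diffusion with flow matching. The audio pathway described below adds about 2.5% more parameters, which is consistent with the 12.3B total cited above. A VAE compresses the video into latent tokens, which are then denoised iteratively while conditioned on the multimodal inputs.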
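The iterative denoising step can be illustrated with a minimal flow-matching sampler. This sketch assumes the common rectified-flow convention x_t = (1 - t)·x0 + t·noise, in which the network predicts the velocity (noise - x0). Sampling integrates from t = 1 (pure noise) to t = 0 (clean latent) with plain Euler steps. The `oracle` velocity function is a toy stand-in for OmniShow's conditioned MMDiT; this article does not specify the real solver or timestep schedule.

```python
import numpy as np

def euler_flow_sample(velocity_fn, shape, num_steps=20, seed=0):
    """Integrate dx/dt = v(x, t) from t = 1 (noise) down to t = 0 (data)."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(shape)               # Gaussian noise at t = 1
    ts = np.linspace(1.0, 0.0, num_steps + 1)
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        v = velocity_fn(x, t_cur)                # predicted velocity (noise - x0)
        x = x + (t_next - t_cur) * v             # Euler step; t decreases toward 0
    return x

# Toy setup: the "clean latent" is known, so the exact velocity can be computed.
target = np.arange(12, dtype=float).reshape(3, 4)   # stand-in for VAE latent tokens

def oracle(x, t):
    # On a straight noise-to-data path, x_t - x0 = t * (noise - x0).
    return (x - target) / t

sample = euler_flow_sample(oracle, target.shape)
```

Because the oracle's velocity is exact along a straight path, the Euler sampler lands on the target. A trained model only approximates this velocity, which is why step count and solver choice affect quality.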

Key Innovations

- Unified Channel-wise Conditioning: Reference images and pose sequences are VAE-encoded and injected directly into feature channels via concatenation with noisy video tokens and pseudo-frame tokens. Binary masks control activation, paired with a reference reconstruction loss. This preserves high visual quality without the degradation common in adapter-based methods.

- Gated Local-Context Attention: Audio features (extracted via Wav2Vec 2.0) are packed with sliding-window context (size 5) and injected through masked attention in dual-stream blocks. A learnable gating vector stabilizes training and modulates influence, ensuring precise action-sound alignment with only a 2.5% model size increase.

- Decoupled-Then-Joint Training: To handle data scarcity for full multimodal pairs, separate R2V and A2V models are trained on heterogeneous datasets, then merged (6:4 ratio favoring audio sensitivity). Joint fine-tuning on RA2V and high-quality RAP2V data unlocks emergent capabilities without overfitting.
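The merge step in Decoupled-Then-Joint training can be pictured as weighted parameter averaging. The sketch below makes three assumptions:

- Matching weights are linearly interpolated.
- "6:4 favoring audio sensitivity" means 0.6 on the A2V model and 0.4 on the R2V model.
- Parameters that exist in only one model (such as audio layers) are copied over unchanged.

`merge_checkpoints` and the parameter names are hypothetical.

```python
import numpy as np

def merge_checkpoints(a2v, r2v, a2v_weight=0.6):
    """Linearly merge two state dicts of NumPy arrays (hypothetical helper)."""
    merged = {}
    for name in a2v.keys() | r2v.keys():
        if name in a2v and name in r2v:
            # Shared backbone weight: weighted average tilted toward the audio model.
            merged[name] = a2v_weight * a2v[name] + (1.0 - a2v_weight) * r2v[name]
        else:
            # Module present in only one model (e.g. audio attention): keep as is.
            merged[name] = a2v[name] if name in a2v else r2v[name]
    return merged

a2v = {"block0.attn.w": np.full((2, 2), 1.0), "block0.audio_gate": np.zeros(4)}
r2v = {"block0.attn.w": np.full((2, 2), 0.0)}
merged = merge_checkpoints(a2v, r2v)
print(merged["block0.attn.w"])  # every entry is 0.6
```

Joint fine-tuning on RA2V and RAP2V data would then start from a merged checkpoint like this one.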

The pipeline processes inputs in parallel, fuses them cross-modally, and refines via diffusion—resulting in outputs that feel director-controlled rather than generically animated.
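Both fusion paths can be sketched in a simplified form. `channelwise_condition` illustrates Unified Channel-wise Conditioning: the reference becomes a pseudo-frame, conditions are concatenated along channels with the noisy latents, and a binary mask marks where a condition is active. `gated_local_audio_attention` illustrates Gated Local-Context Attention: each frame attends only to audio within a local window, and a learnable gating vector scales the result. The tensor layout, the pseudo-frame convention, the single head without Q/K/V projections, and the tanh-squashed gate are all assumptions. The tanh gate is borrowed from other gated cross-attention designs such as Flamingo. None of this is OmniShow's published implementation, and both function names are hypothetical.

```python
import numpy as np

def channelwise_condition(noisy, ref, pose=None):
    """Assemble DiT input frames as [noisy | condition | mask] along channels.

    noisy: (T, H, W, C) noisy video latents
    ref:   (H, W, C) VAE latent of the reference image
    pose:  (T, H, W, C) VAE latents of the pose sequence, or None
    Returns (T + 1, H, W, 2C + 1); frame 0 is a pseudo-frame for the reference.
    """
    T, H, W, C = noisy.shape
    # Pseudo-frame has no noisy content; its condition slot holds the reference.
    noisy_part = np.concatenate([np.zeros((1, H, W, C)), noisy], axis=0)
    pose_part = pose if pose is not None else np.zeros_like(noisy)
    cond_part = np.concatenate([ref[None], pose_part], axis=0)
    # Binary mask channel marks which frames carry an active condition.
    mask = np.zeros((T + 1, H, W, 1))
    mask[0] = 1.0
    if pose is not None:
        mask[1:] = 1.0
    return np.concatenate([noisy_part, cond_part, mask], axis=-1)


def gated_local_audio_attention(x, audio, gate, window=5):
    """Add windowed audio attention to per-frame features through a gate.

    x:     (T, D) one feature vector per video frame (no Q/K/V projections)
    audio: (T, D) audio features already aligned to video frames
    gate:  (D,) gating vector; tanh(0) = 0, so a zero gate leaves x unchanged
    """
    T, D = x.shape
    idx = np.arange(T)
    # Local context: frame i attends only to audio frames within window // 2.
    local = np.abs(idx[:, None] - idx[None, :]) <= window // 2
    scores = np.where(local, x @ audio.T / np.sqrt(D), -np.inf)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))  # masked softmax
    weights /= weights.sum(axis=1, keepdims=True)
    return x + np.tanh(gate) * (weights @ audio)


rng = np.random.default_rng(0)
tokens = channelwise_condition(rng.standard_normal((8, 4, 4, 16)),
                               rng.standard_normal((4, 4, 16)))
print(tokens.shape)  # (9, 4, 4, 33)

x, audio = rng.standard_normal((12, 32)), rng.standard_normal((12, 32))
fused = gated_local_audio_attention(x, audio, gate=np.full(32, 0.5))
```

Concatenating along channels keeps conditions spatially aligned with the video tokens, without adding extra tokens or adapter branches. Initializing the gate at zero lets audio influence grow gradually during training.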

Performance Benchmarks and Comparisons

Benchmarks on the custom HOIVG-Bench (135 diverse 5-second clips with human/object references, poses, and audio) demonstrate OmniShow's superiority:

- R2V: Leads in reference consistency (FaceSim 0.759, NexusScore 0.876) and overall quality while maintaining strong text alignment.

- RA2V & RP2V: Outperforms HunyuanCustom, HuMo-17B, AnchorCrafter, and VACE in sync metrics (Sync-C/Sync-D), pose accuracy (AKD/PCK), and video quality (AES/IQA).

- RAP2V: OmniShow is the only evaluated model that supports this mode natively. It beats cascaded baselines across nearly all metrics, including motion coherence and physical plausibility.

Community and research feedback highlights reduced artifacts in complex interactions compared to single-modal or cascaded approaches. Long-shot continuity and physics compliance stand out as differentiators.
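Two of the pose metrics named above have simple standard definitions:

- AKD (average keypoint distance) is the mean Euclidean distance between keypoints detected in the generated video and the driving pose.
- PCK (percentage of correct keypoints) is the fraction of keypoints that fall within a threshold of their targets.

The sketch assumes a pose estimator has already extracted the keypoints and uses a fixed pixel threshold. HOIVG-Bench's exact threshold and normalization are not given here.

```python
import numpy as np

def akd_and_pck(pred, target, threshold=10.0):
    """pred, target: (frames, keypoints, 2) pixel coordinates."""
    dist = np.linalg.norm(pred - target, axis=-1)   # per-keypoint Euclidean error
    akd = dist.mean()                               # lower is better
    pck = (dist <= threshold).mean()                # higher is better
    return float(akd), float(pck)

target = np.zeros((2, 3, 2))
pred = target.copy()
pred[0, 0] = [3.0, 4.0]     # 5 px off: within the threshold
pred[1, 2] = [30.0, 40.0]   # 50 px off: counted as a miss
akd, pck = akd_and_pck(pred, target)   # AKD ≈ 9.17 px, PCK = 5/6 ≈ 0.83
```

The two metrics complement each other. AKD is sensitive to a few large errors, while PCK counts how many keypoints are "close enough".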

Real-World Applications and E-Commerce Impact

OmniShow shines in practical scenarios:

- E-commerce product demos: Create professional unboxing, usage, or try-on videos from product photos and voiceovers, cutting production costs from thousands of dollars to under $10 per video.

- Marketing content: Generate UGC-style shorts with AI presenters demonstrating features naturally.

- Creative workflows: Remix existing videos, swap objects, or animate avatars with audio-driven expressions.

Brands benefit from faster iteration, potentially higher engagement (e.g., a reported 67% CTR uplift on social), and consistent branding without booking studios or on-camera talent.

Advanced Tips for Optimal Results

To maximize quality:

- Use high-resolution, front-facing reference images with neutral lighting for best identity preservation.

- Provide clear, concise text prompts describing actions and camera angles; pair with precise pose sequences for complex hand-object interactions.

- For audio, use clean voiceovers resampled to the rate the audio encoder expects (Wav2Vec 2.0 models take 16 kHz input), and test short clips first to refine synchronization.

- Leverage RAP2V mode for edge cases like multi-object handling or camera movement—start with R2V then layer conditions iteratively.
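Synchronization depends on aligning two different rates. Wav2Vec 2.0 checkpoints expect 16 kHz audio and produce roughly one feature vector per 20 ms (about 50 per second), while OmniShow outputs 24 fps video. Audio features therefore have to be mapped onto the video-frame timeline before any per-frame or windowed conditioning.

The sketch below resamples the features by linear interpolation and lists the frames a size-5 window would cover. Both the interpolation and the assumption that the window is measured in video frames are guesses, not documented OmniShow behavior. The helper names are hypothetical.

```python
import numpy as np

W2V_SAMPLE_RATE = 16_000   # Hz expected by Wav2Vec 2.0 checkpoints
W2V_HOP = 320              # ~samples per output feature (20 ms at 16 kHz)

def features_per_video_frame(audio_feats, fps=24):
    """Linearly resample (N, D) ~50 Hz audio features to one row per video frame."""
    feat_rate = W2V_SAMPLE_RATE / W2V_HOP                # ~50 features per second
    n, d = audio_feats.shape
    num_frames = int(round(n / feat_rate * fps))         # frames covering the audio
    feat_times = np.arange(n) / feat_rate                # timestamp of each feature
    frame_times = np.arange(num_frames) / fps            # timestamp of each frame
    # Interpolate each feature dimension onto the video-frame timeline.
    return np.stack([np.interp(frame_times, feat_times, audio_feats[:, k])
                     for k in range(d)], axis=1)

def local_window(frame_idx, num_frames, size=5):
    """Frame indices a window of `size` centred on frame_idx covers (clipped at edges)."""
    half = size // 2
    return list(range(max(0, frame_idx - half), min(num_frames, frame_idx + half + 1)))

feats = np.random.default_rng(0).standard_normal((500, 768))  # ~10 s of features
per_frame = features_per_video_frame(feats)
print(per_frame.shape)          # (240, 768)
print(local_window(0, 240))     # [0, 1, 2]
print(local_window(100, 240))   # [98, 99, 100, 101, 102]
```

The sketch shows why a voiceover recorded at a different sample rate, or trimmed to a length that does not match the clip, can push speech and gestures out of step: the frame timestamps no longer line up with the audio timestamps.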

Common Pitfalls and How to Avoid Them

- Conflicting inputs: Overly complex poses with mismatched audio can cause minor blur or artifacts in intense motion; resolve by simplifying one modality initially.

- Data scarcity effects: Training mitigates data scarcity, but low-quality references still reduce fidelity. Check every reference for resolution, lighting, and a clear view of the person and product before generating.

- Short-clip bias in evaluation: HOIVG-Bench clips are 5 seconds long, so benchmark scores say little about longer generations. Generate and review full sequences (up to 10 seconds) to check temporal consistency.

- Over-reliance on defaults: In advanced setups, tuning gating and mask settings can yield better results than zero-shot use with default settings.

Addressing these ensures reliable, production-ready videos.

Conclusion

OmniShow represents a significant leap in controllable video generation, making professional human-object interaction content accessible at scale. Its unified multimodal approach, backed by rigorous innovations and benchmarks, sets a new standard for realism and practicality in AI video tools.

For e-commerce teams, creators, or researchers ready to transform video production, explore the official project page or commercial implementations to start generating cinematic HOI videos today. The future of product storytelling is here—one precise, multimodal prompt at a time.
