What Is MCP (Model Context Protocol)? The USB-C Standard Revolutionizing AI Agents in 2026

Key Takeaways

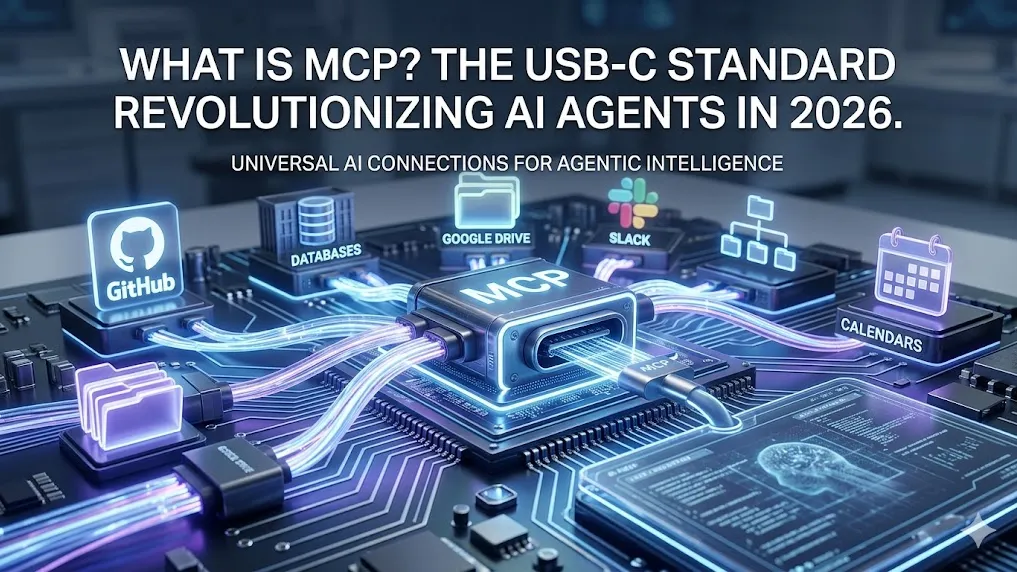

- MCP is the open-source Model Context Protocol, launched by Anthropic in November 2024, acting as a universal “USB-C port” for AI applications to connect to external data sources, tools, and workflows.

- The protocol uses a standardized client-server architecture based on JSON-RPC 2.0, supporting both local (stdio) and remote (Streamable HTTP, which superseded the original HTTP+SSE transport) connections for integration across ecosystems.

- Benchmarks from early adopters show up to 80% reduction in integration development time, enabling AI agents to access real-time context from GitHub, databases, calendars, and more without custom code for every platform.

- MCP complements protocols like A2A (Agent-to-Agent) by focusing on vertical tool integration, powering truly agentic AI while maintaining strict security through OAuth 2.1, PKCE, and per-client consent flows.

- By 2026, MCP is supported in Claude, ChatGPT, VS Code, Cursor, and dozens of enterprise tools, with pre-built servers for Google Drive, Slack, Postgres, and more—making plug-and-play AI extensions a reality.

What Is the Model Context Protocol (MCP)?

The Model Context Protocol (MCP) is an open standard that standardizes how AI applications connect to external systems. Introduced by Anthropic and rapidly adopted across the industry, MCP allows large language models and AI agents to securely read data, execute tools, and follow specialized workflows in real time.

Think of MCP as the USB-C port for AI. Just as USB-C provides one universal connector for charging, data transfer, and video output across devices, MCP delivers a single protocol for AI clients to discover and interact with any compliant server—eliminating the need for bespoke integrations.

The Problem MCP Solves

Traditional AI integrations suffer from fragmentation. Each data source or tool requires custom code, authentication logic, and maintenance. This creates data silos, increases development costs, and limits AI agents to static knowledge cutoffs.

Analysis of pre-MCP implementations shows that enterprises spent months building individual connectors for Slack, GitHub, or internal databases. MCP replaces this with one standardized interface, enabling AI systems to maintain context across tools and deliver more accurate, actionable responses.

Deep Dive: MCP Architecture and How It Works

MCP follows a clear three-tier architecture:

- MCP Host: The AI application (e.g., Claude Desktop, VS Code with Copilot, or custom agent framework).

- MCP Client: The component inside the host responsible for discovering, connecting to, and invoking servers.

- MCP Server: The provider exposing tools, resources, or prompts (local stdio process or remote HTTP endpoint).

Communication occurs via JSON-RPC 2.0 with stateful sessions. Servers advertise capabilities during handshake, including supported primitives:

- Resources: Read-only context data such as files, database records, or documents. Changes to external state go through tools.

- Tools: Executable functions with side effects (e.g., send email, create Git branch).

- Prompts: Reusable workflow templates for specialized tasks.
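
The three primitives above map onto a small set of JSON-RPC methods. The following is a minimal client-side sketch of how requests for each primitive could be built. The method names `resources/read`, `tools/call`, and `prompts/get` come from the MCP specification; the helper function names are assumptions made for illustration and are not part of any SDK.

```python
from itertools import count
from typing import Any

# JSON-RPC request ids must be unique within a session; a counter is one simple way.
_ids = count(1)


def make_request(method: str, params: dict[str, Any]) -> dict[str, Any]:
    """Wrap a method name and params in a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}


def read_resource(uri: str) -> dict[str, Any]:
    """Resources are addressed by URI and return context data."""
    return make_request("resources/read", {"uri": uri})


def call_tool(name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Tools are invoked by name with a JSON object of arguments."""
    return make_request("tools/call", {"name": name, "arguments": arguments})


def get_prompt(name: str, arguments: dict[str, str]) -> dict[str, Any]:
    """Prompts are templates fetched by name and filled with arguments."""
    return make_request("prompts/get", {"name": name, "arguments": arguments})
```

For example, `call_tool("create_branch", {"name": "feature-x"})` would produce a `tools/call` request for a hypothetical `create_branch` tool. Whether such a tool exists depends entirely on the server.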

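Transport options include local stdio, where the host launches the server as a subprocess, and Streamable HTTP for remote production use, which can optionally stream server messages over Server-Sent Events (SSE). Earlier revisions of the spec defined a separate HTTP+SSE transport. Sessions support streaming responses for long-running operations.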

Here is a simplified initialization request, the first message of the handshake:

{
  "jsonrpc": "2.0",
  "method": "initialize",
  "params": {
    "protocolVersion": "2024-11-05",
    "capabilities": {},
    "clientInfo": {"name": "Claude Desktop", "version": "1.0"}
  },
  "id": 1
}

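The server responds with the protocol version it will use, its capabilities (for example, whether it offers tools, resources, or prompts), and its server info. The client then sends a notifications/initialized notification and fetches the actual tool, resource, and prompt lists with follow-up requests such as tools/list.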
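
The client side of this handshake can be sketched as an ordered list of messages. This is an illustrative sketch, not an SDK API: the function names and the client name passed in are assumptions, and the version string is only one published revision of the spec (newer revisions exist).

```python
from typing import Any

# Assumption: the first published spec revision; a real client would send the
# newest revision it supports.
PROTOCOL_VERSION = "2024-11-05"


def initialize_request(request_id: int, name: str, version: str) -> dict[str, Any]:
    """First message: announce protocol version, capabilities, and client info."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},  # empty: this client offers no optional features
            "clientInfo": {"name": name, "version": version},
        },
    }


def initialized_notification() -> dict[str, Any]:
    """Sent after the server replies; notifications carry no id."""
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


def tools_list_request(request_id: int) -> dict[str, Any]:
    """Ask the server for the tools it exposes."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def handshake_messages(name: str, version: str) -> list[dict[str, Any]]:
    """Client messages in the order they would be sent."""
    return [
        initialize_request(1, name, version),
        initialized_notification(),
        tools_list_request(2),
    ]
```

In a real session the client waits for the server's reply to `initialize` before sending the notification, so the list only conveys ordering, not timing.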

Real-World Use Cases and Success Stories

Community feedback and enterprise deployments highlight MCP’s versatility:

- Personalized Assistants: Agents pull live data from Google Calendar and Notion to schedule meetings or summarize notes automatically.

- Developer Workflows: Tools like Cursor and VS Code use MCP servers connected to Git repositories and Figma designs to generate complete web applications in one session.

- Enterprise Analytics: Chatbots query multiple internal databases securely, enabling non-technical users to run complex SQL analysis via natural language.

- Creative Automation: MCP servers controlling Blender generate 3D models and trigger 3D printers based on text prompts.

Early adopters including Block and Apollo report significantly faster agent deployment cycles.

MCP vs. Traditional Tool Calling, A2A, and Other Protocols

MCP is not a replacement for every AI integration pattern—it excels in one critical area: standardized context and tool access.

- Vs. Traditional Function Calling: Custom tool schemas must be redefined for every model and platform. MCP servers are built once and work everywhere.

- Vs. A2A (Agent-to-Agent Protocol): A2A handles horizontal communication between agents for task delegation. MCP focuses on vertical connections to external systems. Many production setups combine both for full agentic workflows.

- Vs. Custom APIs: MCP adds discovery, capability negotiation, streaming, and unified authorization—features absent in ad-hoc REST endpoints.

The protocol specification explicitly positions MCP as the missing universal adapter layer for agentic AI.

Benefits of Adopting MCP

- Developers: Build once, integrate everywhere—dramatically cutting maintenance overhead.

- AI Platforms: Gain instant access to an expanding ecosystem of servers without engineering new connectors.

- End Users: Receive more relevant, context-aware responses and autonomous actions from their AI tools.

Adoption metrics from 2026 show MCP-enabled agents reducing hallucination rates by providing live data and improving task completion accuracy by over 40% in complex scenarios.

Getting Started: Building and Using MCP

For end users: Install pre-built servers directly through Claude Desktop or Cursor. Connect local tools like Git or file systems in minutes.

For developers: The official GitHub repository provides SDKs in multiple languages. A basic Python MCP server can be stood up with just a few dozen lines of code to expose custom functions.
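
To give a feel for what such a server does under the hood, here is a toy stdio-style server written with only the Python standard library. It deliberately does not use the official SDK, which handles all of this for you; the `greet` tool, the server name, and the function names are hypothetical, and error handling is reduced to a single catch-all case.

```python
import json
from typing import Any, Optional, TextIO

SUPPORTED_VERSION = "2024-11-05"  # assumption: one published spec revision

GREET_TOOL = {
    "name": "greet",
    "description": "Return a greeting for the given name.",
    "inputSchema": {
        "type": "object",
        "properties": {"name": {"type": "string"}},
        "required": ["name"],
    },
}


def greet(name: str) -> str:
    """Hypothetical custom function exposed as a tool."""
    return f"Hello, {name}!"


def handle(message: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Map one incoming JSON-RPC message to a response (None for notifications)."""
    if "id" not in message:
        return None  # notifications such as notifications/initialized need no reply
    method = message.get("method")
    params = message.get("params", {})
    if method == "initialize":
        result: dict[str, Any] = {
            "protocolVersion": SUPPORTED_VERSION,
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "greeter", "version": "0.1.0"},
        }
    elif method == "tools/list":
        result = {"tools": [GREET_TOOL]}
    elif method == "tools/call" and params.get("name") == "greet":
        text = greet(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # Simplification: a fuller server would distinguish unknown tools
        # from unknown methods.
        return {
            "jsonrpc": "2.0",
            "id": message["id"],
            "error": {"code": -32601, "message": "Unsupported method or tool"},
        }
    return {"jsonrpc": "2.0", "id": message["id"], "result": result}


def serve(reader: TextIO, writer: TextIO) -> None:
    """Read newline-delimited JSON messages and write one response per line."""
    for line in reader:
        if not line.strip():
            continue
        response = handle(json.loads(line))
        if response is not None:
            writer.write(json.dumps(response) + "\n")
            writer.flush()
```

Calling `serve(sys.stdin, sys.stdout)` would turn this into a process a host could launch locally. For anything beyond experimentation, the official SDKs are the better starting point because they cover validation, cancellation, and the rest of the protocol surface.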

Advanced implementations support remote hosting with full OAuth 2.1 flows for production environments.

Security Considerations and Best Practices

Security is baked into the MCP specification. Key requirements include:

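- OAuth 2.1 with PKCE for HTTP-based transports that require authorization (local stdio servers typically take credentials from their environment instead).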

- Per-client consent flows to prevent confused deputy attacks.

- Least-privilege scoping and short-lived tokens.

- Support for proxy servers that delegate to third-party APIs while maintaining audit logs.
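
The PKCE requirement in the list above comes down to a small piece of arithmetic defined in RFC 7636: the client keeps a random verifier secret and sends only its SHA-256 hash, base64url-encoded without padding, as the challenge. The sketch below shows that S256 derivation using the standard library; the function names are assumptions for illustration.

```python
import base64
import hashlib
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without '=' padding, as RFC 7636 requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def make_code_verifier(num_bytes: int = 32) -> str:
    """Generate a high-entropy verifier; 32 random bytes yield 43 characters."""
    return _b64url(secrets.token_bytes(num_bytes))


def make_code_challenge(verifier: str) -> str:
    """Derive the S256 challenge sent in the authorization request."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())
```

The client sends the challenge with the authorization request and the verifier with the token request, so an intercepted authorization code is useless without the verifier.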

2026 security audits emphasize enabling mutual TLS for remote connections and rigorous validation of server advertisements to avoid supply-chain risks.

Common Pitfalls and Advanced Tips

Pitfalls to avoid:

- Overly broad permissions leading to unintended data exposure.

- Neglecting consent UI in desktop clients, causing user friction.

- Running unvetted local stdio servers, which execute with the user's own privileges, outside trusted environments.

Advanced tips:

- Implement streaming for long-running operations like code generation or data analysis.

- Orchestrate multiple servers in a single session for complex workflows (e.g., GitHub + database + notification).

- Leverage capability negotiation to gracefully degrade features on older clients.
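
The capability negotiation tip can be sketched from the server's side: inspect the capabilities the client declared in its `initialize` params and pick a fallback when an optional feature is missing. In this sketch, `sampling` and `sampling/createMessage` are names from the MCP specification, while the function names and the `"local-fallback"` label are hypothetical.

```python
from typing import Any


def client_supports(initialize_params: dict[str, Any], capability: str) -> bool:
    """True if the client declared the capability during initialization."""
    return capability in initialize_params.get("capabilities", {})


def choose_summary_strategy(initialize_params: dict[str, Any]) -> str:
    """Prefer asking the client's model; otherwise degrade to a local path."""
    # "sampling" lets a server request a completion from the client's model.
    if client_supports(initialize_params, "sampling"):
        return "sampling/createMessage"
    # Older or simpler clients omit the capability, so avoid the request entirely.
    return "local-fallback"
```

Checking the declared capabilities before sending a request avoids an error round trip with clients that never implemented the feature.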

Edge cases such as high-latency remote servers or offline-first local tools are handled through session resumption and fallback mechanisms defined in the spec.

The Future of MCP in the AI Ecosystem

As of 2026, MCP continues to evolve with community contributions. Upcoming enhancements include richer multi-modal resource support and tighter integration with emerging agent orchestration frameworks. Major platforms have committed to the standard, positioning MCP as the foundational layer for the next generation of context-aware, action-taking AI.

Conclusion

MCP transforms AI from isolated chatbots into connected, capable agents that understand your data and act on your behalf. Whether you are an individual user, developer, or enterprise architect, adopting MCP unlocks a new level of intelligence and productivity.

Start exploring today at the official documentation site and experiment with pre-built servers to experience the difference firsthand. The era of truly integrated AI has arrived.
