MCP Full Form: What is Model Context Protocol and Why It Powers 2026 AI Agents

Key Takeaways

- MCP full form: Model Context Protocol — an open standard introduced by Anthropic in November 2024 for connecting AI models to external tools, data sources, and systems.

- It standardizes how large language models (LLMs) discover, access, and interact with capabilities like databases, file systems, APIs, and development environments.

- Unlike traditional tool calling, MCP uses a client-server architecture with JSON-RPC, reducing fragmentation and context bloat while improving security and scalability.

- MCP complements protocols like A2A (Agent-to-Agent) and powers real-world AI agents in coding, data analysis, and automation.

- Adoption has accelerated in 2025–2026, with support from Claude, Cursor, Gemini, and major cloud providers.

What Does MCP Stand For?

MCP stands for Model Context Protocol.

It is an open-source standard designed to solve a core limitation of large language models: their isolation from live data and external systems. Before MCP, developers built custom integrations for every tool, leading to duplicated effort, inconsistent security, and bloated context windows.

In effect, MCP provides a universal “language” for AI applications to communicate with external resources. Clients discover what a server offers at runtime, so each integration no longer needs its own hard-coded schema.

The Problem MCP Solves

Large language models excel at reasoning within their training data but struggle with real-time information and actionable tasks. Traditional approaches relied on:

- Manual function/tool calling with per-tool JSON schemas

- Retrieval-Augmented Generation (RAG) for static knowledge

- Custom API wrappers that break when services change

These methods scale poorly. Benchmarks indicate that custom integrations increase development time by 3–5x and raise security risks due to inconsistent permission models.

MCP addresses this by introducing a standardized protocol layer. AI clients (e.g., Claude Desktop or custom agents) connect to MCP servers that expose tools, resources, and prompts in a consistent format.

How Model Context Protocol Works

MCP follows a client-server model:

- MCP Client: Embedded in AI applications (e.g., Claude, Cursor, or agent frameworks). It discovers available servers and invokes tools.

- MCP Server: A lightweight program that wraps an external system (PostgreSQL, GitHub, a file system, the uv package manager, etc.). It accepts standardized JSON-RPC 2.0 requests from clients and translates each one into an operation on that system.

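- Transports: The specification defines stdio for local processes and Streamable HTTP for remote servers (this superseded the earlier HTTP+SSE transport). Custom transports such as WebSocket are possible but are not part of the standard, so check what your clients support in desktop, cloud, or container environments.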
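
As a rough illustration of the message shapes involved, the sketch below builds the JSON-RPC 2.0 requests a client might send to list and call tools. The method names `tools/list` and `tools/call` follow the MCP specification; the tool name `query_database` and its arguments are hypothetical, and the one-message-per-line framing reflects how the stdio transport separates messages. It is not a full client and skips the initialization handshake.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids should be unique within a session


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


def frame_for_stdio(message):
    """Serialize one message as a single newline-terminated line."""
    return json.dumps(message, separators=(",", ":")) + "\n"


list_tools = make_request("tools/list")
call_tool = make_request(
    "tools/call",
    # Hypothetical tool name and arguments, for illustration only.
    {"name": "query_database", "arguments": {"sql": "SELECT 1"}},
)

print(frame_for_stdio(list_tools), end="")
print(frame_for_stdio(call_tool), end="")
```

A real client would normally use an MCP SDK rather than hand-building messages; the point here is only that every call is an ordinary JSON-RPC request with a method name and parameters.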

Core Components

- Tools: Executable functions with input schemas and descriptions.

- Resources: Readable data sources (files, database tables, API endpoints).

- Prompts: Reusable instruction templates for consistent agent behavior.

When an AI agent needs to act, it sends a request to the MCP server. The server executes the operation safely (often with read-only modes or scoped permissions) and returns structured results. This keeps the model’s context window lightweight — agents call tiny CLI binaries or servers instead of embedding massive schemas.
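
The request and response flow described above can be sketched as a toy dispatcher. This is a simplified in-memory stand-in, not the official SDK: the `add` tool, the `TOOLS` registry, and the `handle` function are hypothetical names, and the result shapes are only loosely modeled on those in the MCP specification.

```python
import json


def add(arguments):
    """Hypothetical tool handler: add two integers."""
    return arguments["a"] + arguments["b"]


# Hypothetical registry: each tool has a description, an input schema, and a handler.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        "handler": add,
    }
}


def error(req_id, code, message):
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle(request):
    """Dispatch one JSON-RPC 2.0 request and return the response dict."""
    method, req_id = request.get("method"), request.get("id")
    params = request.get("params", {})
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {"jsonrpc": "2.0", "id": req_id, "result": {"tools": tools}}
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(req_id, -32602, "Unknown tool")
        value = tool["handler"](params.get("arguments", {}))
        content = [{"type": "text", "text": str(value)}]
        return {"jsonrpc": "2.0", "id": req_id, "result": {"content": content, "isError": False}}
    return error(req_id, -32601, "Method not found")


response = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
                   "params": {"name": "add", "arguments": {"a": 2, "b": 3}}})
print(json.dumps(response))
```

The model only ever sees the tool list and the structured result, which is why the schemas for unused tools do not need to sit in its context.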

Key Benefits of MCP

- Standardization: One integration unlocks an ecosystem of MCP-compatible servers.

- Security: Granular permissions, read-only transactions, and least-privilege execution reduce risks compared to raw function calling.

- Efficiency: Reduces context bloat; community feedback suggests 40–60% lower token usage in tool-heavy workflows, though results depend on the workload.

- Discoverability: Automatic server and tool discovery from IDE configs (Claude, Cursor, VS Code).

- Reusability: Developers build once; agents across vendors (Claude, Gemini, OpenAI Responses API) can consume the same servers.
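
To make the discoverability point concrete, here is a small sketch that reads a client configuration and lists the servers it declares. It assumes the `mcpServers` layout used by Claude Desktop-style config files; the server names, commands, paths, and the `list_servers` helper are all hypothetical, and other clients may use a different key or file location.

```python
import json

# Example config text. Server names, commands, and paths are hypothetical.
CONFIG_TEXT = """
{
  "mcpServers": {
    "files": {
      "command": "example-files-server",
      "args": ["--root", "/tmp/project"]
    },
    "db": {
      "command": "example-db-server",
      "args": ["--read-only"],
      "env": {"DB_URL": "postgresql://localhost/demo"}
    }
  }
}
"""


def list_servers(config_text):
    """Return (name, argv) pairs for each server declared in the config."""
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    return [
        (name, [entry["command"], *entry.get("args", [])])
        for name, entry in servers.items()
    ]


for name, argv in list_servers(CONFIG_TEXT):
    print(name, "->", " ".join(argv))
```

A client would launch each command as a subprocess and then ask it for its tools, so adding a server is a config change rather than new integration code.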

MCP vs Traditional Tool Calling and RAG

| Aspect | Traditional Tool Calling | RAG | Model Context Protocol (MCP) |
|---|---|---|---|
| Focus | Custom per-tool schemas | Knowledge retrieval | Standardized tool + data access |
| Action Capability | Limited, brittle | Read-only | Read/write with safety controls |
| Scalability | Poor (N integrations) | Good for static data | Excellent (ecosystem of servers) |
| Security | Varies by implementation | Moderate | Built-in permissions and scoping |
| Context Overhead | High (full schemas) | Medium | Low (discovery + lightweight calls) |

MCP goes beyond RAG by enabling actions (e.g., updating a database or running uv sync) while maintaining stronger safety boundaries.

MCP vs A2A: Complementary Protocols

- MCP (Model Context Protocol): Vertical integration — equips a single agent with tools and data.

- A2A (Agent-to-Agent): Horizontal collaboration — enables multiple agents to delegate tasks, share state, and orchestrate workflows.

Many production systems use both: agents leverage MCP servers for capabilities and A2A for inter-agent coordination. This layered approach supports complex multi-agent systems without tight coupling.

Real-World Use Cases and Ecosystem

- AI Coding Assistants: Tools like uv-mcp (an Astral uv wrapper) or postgres-mcp let agents diagnose environments, install dependencies, or tune database indexes via natural language.

- Data Analysis: Secure read-only access to PostgreSQL, BigQuery, or internal APIs.

- Development Workflows: File system access, Git operations, and CI/CD integrations within IDEs like Cursor or Claude Code.

- Enterprise Automation: Business tools (CRM, Linear, Figma) exposed safely to agents.

Popular MCP servers in 2026 include database connectors, package managers, browser automation, and design tool integrations. Toolkits like MCPorter provide TypeScript runtimes, CLI generation, and discovery to accelerate adoption.

Advanced Tips and Common Pitfalls

- Security Best Practices: Always prefer read-only modes for untrusted agents. Use scoped permissions and network restrictions. Avoid exposing raw SQL execution without validation.

- Performance: Keep MCP servers lightweight; use connection pooling and caching for stateful tools (e.g., browser sessions).

- Edge Cases: Handle long-running tasks with streaming responses. Test across transports (stdio vs HTTP) for desktop vs cloud deployments.

- Pitfalls to Avoid: Over-exposing dangerous operations, ignoring schema evolution, or neglecting logging/auditing in production.

- Scaling Tip: Combine MCP with code execution patterns — agents generate and run small scripts via MCP rather than direct tool calls for better reliability.

Community implementations have matured rapidly, with Docker images, official docs at modelcontextprotocol.io, and growing support across vendors.

Conclusion

The MCP full form — Model Context Protocol — represents a foundational shift in how AI systems interact with the real world. By standardizing connections between models and external capabilities, it reduces fragmentation, enhances security, and unlocks more capable, reliable AI agents.

As adoption grows in 2026, organizations building agentic workflows should evaluate MCP-compatible tools and servers early. Start by exploring the official specification and experimenting with popular servers for your stack.

Ready to implement MCP? Check the open-source resources and begin integrating today to future-proof your AI applications.

Continue Reading

More articles connected to the same themes, protocols, and tools.

What Is Oh My Claude Code? The Multi-Agent Plugin That Turns Claude Code Into a Full Development Team

Ostris AI Toolkit Guide: The Practical LoRA Training Suite for FLUX, Qwen, Z-Image, Wan, and Modern Diffusion Models

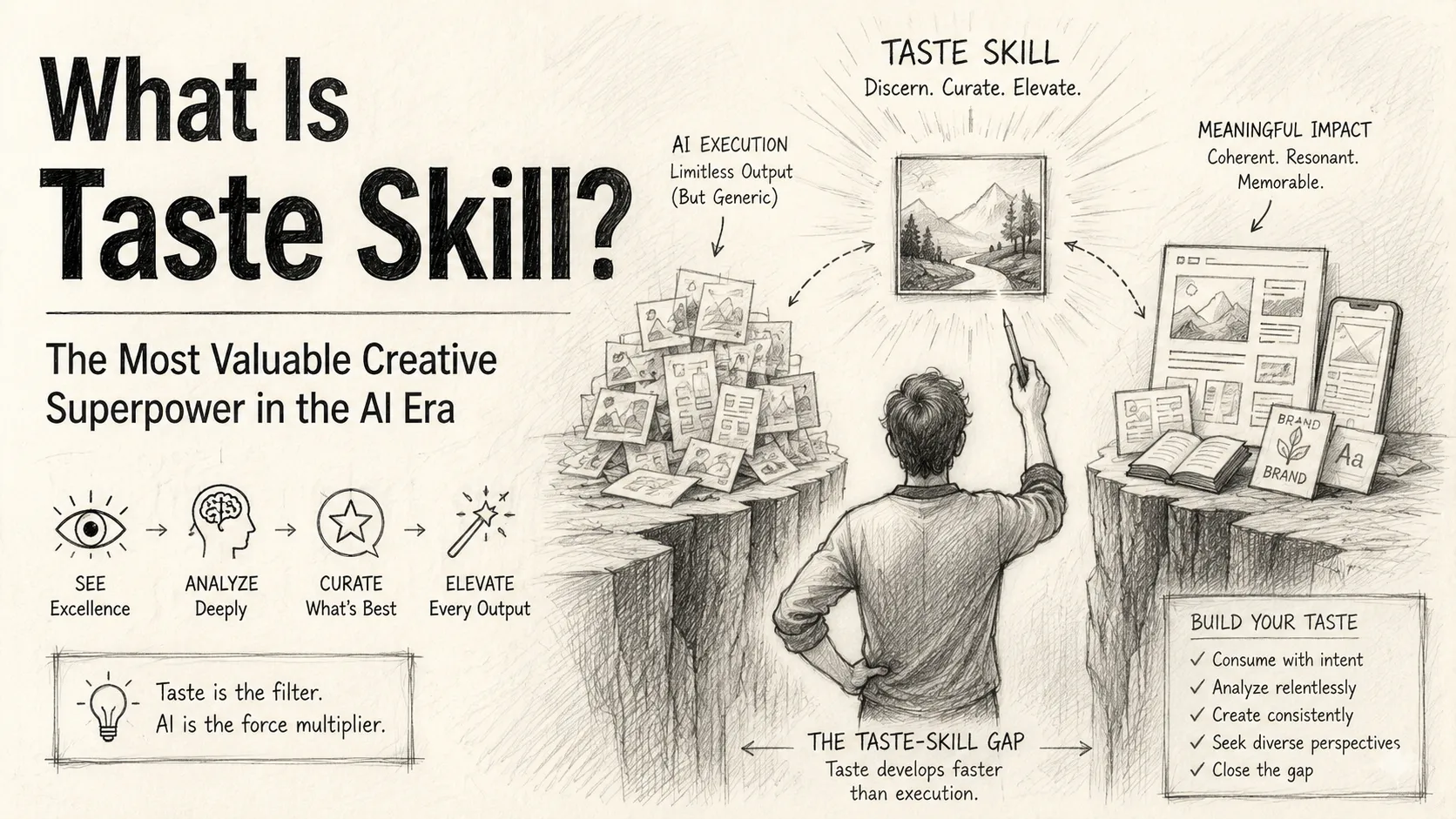

What Is Taste Skill? The Most Valuable Creative Superpower in the AI Era

Referenced Tools

Browse entries that are adjacent to the topics covered in this article.