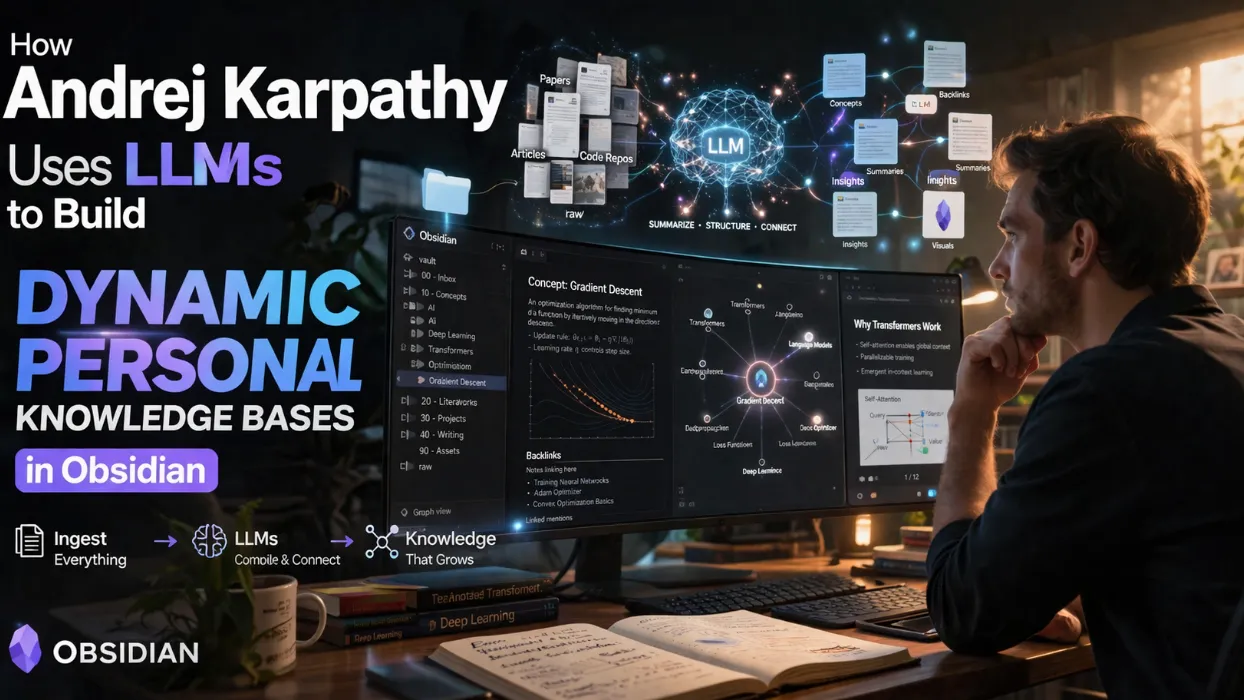

How Andrej Karpathy Uses LLMs to Build Dynamic Personal Knowledge Bases in Obsidian

Key Takeaways

- Andrej Karpathy's system ingests raw documents (papers, articles, repos, images) into a raw/ directory, then uses LLMs to incrementally compile them into a structured Markdown wiki with summaries, backlinks, concept articles, and interconnections.

- Obsidian serves as the lightweight frontend for viewing raw data, the compiled wiki, and generated outputs like Marp slides or Matplotlib plots, with the LLM handling nearly all writing and maintenance.

- At scale (~100 articles, ~400K words), complex Q&A occurs with minimal RAG reliance; the LLM auto-maintains indexes and summaries for efficient context retrieval.

- Linting via LLM health checks identifies inconsistencies, imputes missing data, suggests connections, and proposes new articles, ensuring data integrity.

- Outputs extend beyond text to rendered Markdown, slides, visualizations, or dynamic HTML, often filed back into the wiki to compound knowledge over time.

- Community adoption highlights extensions like agent separation for contamination control, synthetic data for fine-tuning, and ephemeral wikis spawned per query.

The Shift from Code to Knowledge Manipulation

Karpathy describes a shift in how he spends tokens: as frontier LLMs have become strong at knowledge synthesis, not just code generation, a large fraction of his token throughput now goes into manipulating structured knowledge stored as Markdown files and images rather than into code or ephemeral terminal output.

This workflow transforms passive research consumption into an active, self-improving knowledge base. Raw sources accumulate in a dedicated directory. An LLM then "compiles" them incrementally—generating summaries, categorizing content into concepts, authoring linked articles, and establishing backlinks.

Reports from people running similar personal systems suggest that once a wiki reaches critical mass, the complexity of answerable queries grows sharply without a proportional increase in retrieval overhead.

Data Ingestion and Compilation Process

The pipeline begins with targeted collection:

- Source Handling: Research papers, articles, GitHub repos, datasets, and images land in raw/. Web content is converted to Markdown via Obsidian Web Clipper, with images downloaded locally for direct LLM reference.

- Incremental Compilation: LLMs process new documents one by one initially, then pattern-match for efficiency. Instructions like "file this new doc to our wiki" trigger categorization, summarization, and linking.

- Structure Creation: The resulting wiki features:
  - Per-document summaries
  - Concept-level articles
  - Bidirectional backlinks
  - Directory-based organization

Community feedback suggests batch processing or multi-stage pipelines improve directory decisions for larger ingests, though Karpathy keeps early stages human-in-the-loop for quality.
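The incremental step can be approximated with a content-hash manifest, so that only new or changed files in `raw/` are handed to the model. This is a minimal sketch, not Karpathy's tooling: the manifest file, the helper names (`pending_sources`, `mark_compiled`, `filing_prompt`), and the exact prompt wording are hypothetical, and the LLM call itself is left out.

```python
import hashlib
import json
from pathlib import Path

# Hypothetical convention: only these suffixes are inlined into the prompt;
# images and other binaries are referenced by path instead.
TEXT_SUFFIXES = {".md", ".txt"}


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def load_manifest(manifest_path: Path) -> dict[str, str]:
    """Read the {relative path: digest} map of already-compiled sources."""
    return json.loads(manifest_path.read_text()) if manifest_path.exists() else {}


def pending_sources(raw_dir: Path, manifest_path: Path) -> list[Path]:
    """List files under raw/ that are new or have changed since the last compile."""
    seen = load_manifest(manifest_path)
    return [
        path
        for path in sorted(raw_dir.rglob("*"))
        if path.is_file()
        and seen.get(path.relative_to(raw_dir).as_posix()) != file_digest(path)
    ]


def mark_compiled(raw_dir: Path, manifest_path: Path, paths: list[Path]) -> None:
    """Record the current digest of each compiled file in the manifest."""
    seen = load_manifest(manifest_path)
    for path in paths:
        seen[path.relative_to(raw_dir).as_posix()] = file_digest(path)
    manifest_path.write_text(json.dumps(seen, indent=2, sort_keys=True))


def filing_prompt(path: Path) -> str:
    """Build a one-document instruction in the spirit of "file this new doc to our wiki"."""
    if path.suffix.lower() in TEXT_SUFFIXES:
        body = path.read_text(encoding="utf-8")
    else:
        body = f"(non-text source at {path.as_posix()}; attach it separately)"
    return (
        "File this new doc to our wiki: write a summary, place it in the right "
        "concept directory, and add [[backlinks]] to related articles.\n\n"
        f"Source: {path.name}\n\n{body}"
    )
```

A loop built on this would send each `filing_prompt` to the model and call `mark_compiled` only after the result has been reviewed, which keeps the early human-in-the-loop stage intact. The manifest should live outside `raw/` so it is never treated as a source.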

Obsidian as the Ideal Frontend

Obsidian functions as a minimal "IDE" for the system:

- Simultaneous views of raw sources, compiled wiki, and visualizations.

- Plugins like Marp enable slide rendering directly from LLM-generated Markdown.

- Graph views and backlink navigation reveal emergent connections.
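Obsidian computes backlinks on its own, but scripts and agents that work on the plain files may need them too. A minimal sketch, assuming pages are keyed by note name and linked with Obsidian-style `[[wikilinks]]` (including the `[[Target|alias]]` and `[[Target#Heading]]` forms); the function names and the "## Backlinks" footer format are illustrative choices.

```python
import re
from collections import defaultdict

# Matches [[Target]], [[Target|alias]] and [[Target#Heading]]; group 1 is the target.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def outgoing_links(text: str) -> set[str]:
    """Return the set of note names a page links to."""
    return {m.group(1).strip() for m in WIKILINK.finditer(text)}


def backlinks(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each page name to the set of other pages that link to it."""
    incoming: dict[str, set[str]] = defaultdict(set)
    for name, text in pages.items():
        for target in outgoing_links(text):
            if target != name:  # ignore self-links
                incoming[target].add(name)
    return dict(incoming)


def backlink_section(name: str, incoming: dict[str, set[str]]) -> str:
    """Render a footer that a script or the LLM could append to a page."""
    lines = [f"- [[{src}]]" for src in sorted(incoming.get(name, set()))]
    return "## Backlinks\n" + ("\n".join(lines) if lines else "- (none yet)")
```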

Obsidian's local-first Markdown foundation minimizes lock-in while still supporting custom tools. Alternatives like VS Code with Markdown extensions exist, but Obsidian's plugin ecosystem speeds up visual and interactive exploration.

Community implementations often add a separation strategy: keep a high-signal personal vault alongside a "messy" agent-facing vault, so that generated content cannot contaminate hand-curated notes.
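One way to enforce that separation is a promotion step: agent output stays in its own vault until a human marks it as reviewed. The sketch below assumes a hypothetical front matter convention (`status: vetted`) and uses a deliberately minimal `key: value` parser instead of a full YAML library; `promote_vetted` never overwrites an existing note in the personal vault.

```python
import shutil
from pathlib import Path


def frontmatter(text: str) -> dict[str, str]:
    """Parse a minimal 'key: value' front matter block delimited by '---' lines."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}  # unterminated block: treat as no front matter


def promote_vetted(agent_vault: Path, personal_vault: Path) -> list[str]:
    """Copy notes marked 'status: vetted' from the agent vault into the personal vault."""
    promoted = []
    for note in sorted(agent_vault.rglob("*.md")):
        if frontmatter(note.read_text(encoding="utf-8")).get("status") != "vetted":
            continue  # drafts and unmarked notes stay quarantined
        dest = personal_vault / note.relative_to(agent_vault)
        if dest.exists():
            continue  # never overwrite hand-written notes
        dest.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(note, dest)
        promoted.append(dest.relative_to(personal_vault).as_posix())
    return promoted
```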

Advanced Q&A and Output Generation

Once scaled, the wiki supports sophisticated querying:

- LLMs navigate the full corpus, leveraging self-maintained indexes and summaries.

- At ~400K words, context windows handle related clusters efficiently without heavy vector RAG.

- Outputs adapt to needs: Markdown reports, Marp slideshows, Matplotlib figures, or even dynamic HTML/JS for interactive filtering and visualizations.
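Rough arithmetic shows why indexes and summaries matter at this size. Assuming about 0.75 words per token (a common rule of thumb for English prose, not a measurement of this corpus), ~400K words is roughly 530K tokens, while an average article (400K words across ~100 articles, so ~4K words) is about 5.3K tokens. A cluster of related articles therefore fits in context even when the whole corpus may not. The sketch uses keyword overlap against index summaries as a crude stand-in for the model reading the index itself; `select_articles` and its ranking rule are hypothetical.

```python
import re

# Assumed rule of thumb; real ratios depend on the tokenizer and the text.
WORDS_PER_TOKEN = 0.75


def approx_tokens(words: int) -> int:
    """Estimate token count from a word count."""
    return round(words / WORDS_PER_TOKEN)


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_articles(question: str, index: dict[str, str],
                    word_counts: dict[str, int], token_budget: int) -> list[str]:
    """Rank articles by term overlap with their index summary, then greedily
    pack the best matches into a token budget."""
    q = tokenize(question)
    ranked = sorted(index, key=lambda name: (-len(q & tokenize(index[name])), name))
    chosen, used = [], 0
    for name in ranked:
        if not q & tokenize(index[name]):
            break  # the remaining articles share no terms with the question
        cost = approx_tokens(word_counts[name])
        if used + cost <= token_budget:
            chosen.append(name)
            used += cost
    return chosen
```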

Generated artifacts often feed back into the wiki, creating a compounding loop where explorations enhance future queries. Lex Fridman and others report similar setups for podcast research or on-the-go voice interactions via temporary mini-wikis.
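A Marp deck is ordinary Markdown with `marp: true` in its front matter and `---` lines between slides, so an answer can be rendered as slides and filed back into the wiki in one step. A minimal sketch; the `wiki/outputs/` folder and the one-line index entry are hypothetical conventions, not part of Marp or Obsidian.

```python
from pathlib import Path


def marp_deck(title: str, slides: list[tuple[str, list[str]]]) -> str:
    """Render Markdown for Marp: 'marp: true' front matter, then slides split by '---'."""
    pages = [f"# {title}"]
    for heading, bullets in slides:
        pages.append(f"## {heading}\n\n" + "\n".join(f"- {b}" for b in bullets))
    return "---\nmarp: true\n---\n\n" + "\n\n---\n\n".join(pages) + "\n"


def file_output(wiki_dir: Path, name: str, markdown: str) -> Path:
    """Save an artifact under wiki/outputs/ and link it once from wiki/index.md."""
    out = wiki_dir / "outputs" / f"{name}.md"
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text(markdown, encoding="utf-8")
    index = wiki_dir / "index.md"
    existing = index.read_text(encoding="utf-8") if index.exists() else ""
    entry = f"- [[outputs/{name}]]\n"
    if entry not in existing:  # filing the same output twice adds no duplicate link
        if existing and not existing.endswith("\n"):
            existing += "\n"
        index.write_text(existing + entry, encoding="utf-8")
    return out
```

Because the deck lives inside the vault and is linked from the index, the next query can read it like any other article, which is the compounding loop described above.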

LLM-Driven Linting and Maintenance

A standout feature is automated "health checks":

- Detect inconsistent claims across sources ingested weeks apart.

- Impute gaps using web search tools.

- Identify novel connections and candidate articles.

- Suggest follow-up questions to deepen coverage.

This turns the wiki from a static repository into a living research partner. The risk of stale data grows with the wiki, and versioned audits with incremental updates contain that drift more effectively than one-time ingests.
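Some health checks are cheap enough to run without a model, leaving the LLM to handle judgment calls such as contradictory claims. A minimal sketch, assuming pages keyed by note name, `[[wikilinks]]` for links, and a modification timestamp for each source and its summary; the check names, the exempt `index` page, and the prompt wording are illustrative.

```python
import re

# Obsidian-style [[Target]], [[Target|alias]], [[Target#Heading]]; group 1 is the target.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def lint(pages: dict[str, str], source_mtime: dict[str, float],
         summary_mtime: dict[str, float]) -> dict[str, list[str]]:
    """Structural checks that need no model: broken links, orphans, stale summaries."""
    links = {name: {m.group(1).strip() for m in WIKILINK.finditer(text)}
             for name, text in pages.items()}
    linked_to = {dst for src, dsts in links.items() for dst in dsts if dst != src}
    return {
        "broken_links": sorted(f"{src} -> {dst}" for src, dsts in links.items()
                               for dst in dsts if dst not in pages),
        # "index" is exempt: it is the entry point, so nothing needs to link to it.
        "orphans": sorted(n for n in pages if n not in linked_to and n != "index"),
        # A summary is stale (or missing) if its source changed after it was written.
        "stale_summaries": sorted(n for n, t in source_mtime.items()
                                  if t > summary_mtime.get(n, float("-inf"))),
    }


def consistency_prompt(a: str, b: str, pages: dict[str, str]) -> str:
    """Ask the model for the judgment call the script cannot make."""
    return (
        f"Compare [[{a}]] and [[{b}]]. List any claims that contradict each other, "
        "quote the sentences involved, and say which source should be re-checked.\n\n"
        f"## {a}\n{pages[a]}\n\n## {b}\n{pages[b]}"
    )
```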

Emerging Tools and Future Explorations

Users extend the core with:

- Custom CLIs or naive search engines handed to LLMs as tools.

- Synthetic data generation paired with fine-tuning to embed wiki knowledge into model weights.

- Ephemeral wiki generation: a single query spawns a full, linted, iterated knowledge base before final reporting—far beyond simple decoding.
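A "naive search engine" handed to the LLM can be as simple as term counting over the wiki's Markdown files, exposed as a command the agent is allowed to run. This sketch is hypothetical throughout (names, flags, and scoring are assumptions) and ranks purely by raw term frequency.

```python
import argparse
import re
from collections import Counter
from pathlib import Path


def terms(text: str) -> list[str]:
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(wiki_dir: Path, query: str, limit: int = 5) -> list[tuple[str, int]]:
    """Score each Markdown file by the total count of query terms it contains."""
    wanted = set(terms(query))
    hits = []
    for path in sorted(wiki_dir.rglob("*.md")):
        counts = Counter(terms(path.read_text(encoding="utf-8")))
        score = sum(counts[t] for t in wanted)
        if score:
            hits.append((path.relative_to(wiki_dir).as_posix(), score))
    hits.sort(key=lambda hit: (-hit[1], hit[0]))  # best score first, then by path
    return hits[:limit]


def main(argv=None) -> None:
    """Command-line entry point: print 'score<TAB>path' lines, best match first."""
    parser = argparse.ArgumentParser(description="Naive keyword search over a Markdown wiki.")
    parser.add_argument("query")
    parser.add_argument("--wiki", type=Path, default=Path("wiki"))
    parser.add_argument("--limit", type=int, default=5)
    args = parser.parse_args(argv)
    for rel, score in search(args.wiki, args.query, args.limit):
        print(f"{score}\t{rel}")
```

To expose it as a command, save it as, for example, `wiki_search.py` and call `main()` under an `if __name__ == "__main__":` guard; the agent can then run something like `python wiki_search.py "attention" --wiki wiki` and open the top-ranked files.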

Architectural diagrams shared in the community visualize stages from ingest through compilation, querying, and enhancement. Products bridging this for non-developers represent a clear opportunity, as every organization maintains unstructured "raw/" data awaiting compilation.

Compared with traditional PKM (personal knowledge management) tools, the approach has clear advantages: LLM automation reportedly cuts manual curation by 80-90% in active research domains, while backlinks and graph views surface connections a human might miss.

Challenges and Best Practices

- Scale Management: Summaries can go stale; prioritize fresh diffs and audits.

- Contamination Control: Isolate agent-generated content until vetted.

- Incremental Adoption: Start small, let patterns emerge before full autonomy.

- Tooling Simplicity: Flat Markdown directories with AGENTS.md schemas suffice; over-engineering delays value.

Actionable insight: Begin with one research topic. Collect 10-20 sources, prompt an LLM to compile the initial wiki, then iterate queries and linting. Measure value by query depth and time saved versus traditional search/note-taking.
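For that first topic, the layout can start as a few folders plus a conventions file for the agent. A minimal scaffold sketch: the folder names and the `AGENTS.md` rules below are hypothetical examples, not a documented schema from Karpathy's setup, and existing files are never overwritten.

```python
from pathlib import Path

# Hypothetical conventions; adapt them to your own vault.
AGENTS_MD = """# Wiki conventions for LLM agents

- Sources live in raw/ and are never edited.
- Each source gets a summary in wiki/summaries/<source-name>.md.
- Concept articles live in wiki/concepts/ and link related pages with [[wikilinks]].
- wiki/index.md lists every article with a one-line summary.
- Generated reports, slides, and plots go in outputs/ until reviewed.
"""


def scaffold(root: Path, topic: str) -> list[str]:
    """Create a minimal single-topic knowledge base layout; return what was created."""
    created = []
    for sub in ("raw", "wiki/summaries", "wiki/concepts", "outputs"):
        path = root / sub
        if not path.exists():
            path.mkdir(parents=True)
            created.append(sub)
    for rel, text in (("AGENTS.md", AGENTS_MD), ("wiki/index.md", f"# {topic}\n\n")):
        path = root / rel
        if not path.exists():
            path.write_text(text, encoding="utf-8")
            created.append(rel)
    return created
```

From there, drop the 10-20 sources into `raw/` and point the model at `AGENTS.md` before the first compile.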

Conclusion

Andrej Karpathy's LLM-powered knowledge base workflow marks a practical evolution in how researchers and practitioners interact with information. By delegating compilation, maintenance, and synthesis to capable models while retaining Obsidian for intuitive interaction, users achieve deeper understanding with less friction.

This approach compounds over time: every query strengthens the base, every linting pass raises integrity. As frontier models advance, expect broader tools that automate entire ephemeral wikis from natural questions.

Implement a minimal version today—ingest your next research batch and let an LLM build the foundation. The shift from consuming knowledge to actively cultivating it could redefine personal and organizational intelligence in the agentic era.

Start small, iterate relentlessly, and watch your personal wiki evolve into a true intellectual multiplier.
