FastMCP vs Graphiti MCP: Framework vs Specialized Memory Server

Quick Comparison

| Aspect | FastMCP | Graphiti MCP |
|---|---|---|
| Type | Python framework for building any MCP server | Ready-to-use MCP server for temporal knowledge graphs |
| GitHub Stars | 22,898 (#14 on MCP leaderboard) | 24,735 (#13 on MCP leaderboard) |
| Primary Purpose | Expose tools, prompts, resources, and UIs to LLMs | Provide persistent, time-aware memory for agents |
| Core Strength | Rapid custom development with zero boilerplate | Low-latency hybrid search and relationship tracking |
| Performance | Python-based; caching and middleware for scale | Sub-200ms P95 retrieval at scale (hybrid semantic + graph) |
| Pricing | Fully open-source; optional free hosting | Fully open-source; self-hosted |
| Setup Time | Minutes for basic server | Docker + DB config (Neo4j/FalkorDB) |
| Best For | Custom tool integrations | Cross-client agent memory |

Both tools operate within the Model Context Protocol (MCP) ecosystem, enabling seamless connections to clients like Claude Desktop and Cursor. FastMCP accelerates building MCP servers. Graphiti MCP delivers a production-ready memory server.

Performance

FastMCP excels at general-purpose MCP workloads. Its Pythonic decorators wrap functions into tools with automatic schema generation. Version 2.x adds built-in response caching and storage middleware, which speeds up repeated calls with identical inputs. Independent MCP benchmarks (identical 1 CPU/1 GB containers, 50 concurrent users) showed Python implementations (including FastMCP) handling sustained loads effectively, though their raw throughput lagged Go/Java in CPU-heavy or I/O-bound scenarios, by up to 93× in extreme cases. Production optimizations such as caching middleware make it suitable for most real-world agent tool use.

Graphiti MCP is optimized specifically for memory retrieval. It builds temporal knowledge graphs that track how entities and their relationships change over time. Hybrid search (semantic embeddings + keyword + graph traversal) returns results with sub-200ms P95 latency at scale, with no LLM calls during retrieval. Incremental updates keep graphs current without full rebuilds. Real-world deployments report consistent sub-second query times even on large, multi-tenant graphs.
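One common way to merge several ranked result lists without an LLM call is reciprocal rank fusion. The sketch below illustrates that general technique under assumed inputs; it is not a copy of Graphiti's internals, and the fact identifiers and the constant `k=60` are illustrative choices.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: each item scores 1 / (k + rank) per list it appears in."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))


# Hypothetical result lists from three retrieval strategies.
semantic = ["alice_joined_acme", "alice_left_acme", "bob_joined_acme"]
keyword = ["alice_left_acme", "alice_joined_acme"]
graph = ["alice_left_acme", "bob_joined_acme"]

fused = reciprocal_rank_fusion([semantic, keyword, graph])
print(fused)
```

Items that rank well in several lists rise to the top, so a fact found by keyword match and graph traversal can outrank one that only the embedding search preferred.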

Trade-off: Choose FastMCP when you control the workload and can add caching. Choose Graphiti MCP when low-latency, relationship-aware memory is the bottleneck (e.g., reducing hallucinations in long-running agents).

Pricing

Both are fully open-source under permissive licenses (FastMCP is maintained within the Prefect ecosystem; Graphiti is licensed under Apache 2.0).

- FastMCP: No usage fees. Prefect Horizon provides free hosting tiers for FastMCP-based servers. Enterprise auth (Google, Azure, Auth0, etc.) is built-in at no cost.

- Graphiti MCP: No usage fees. Run locally or in Docker with your own Neo4j, FalkorDB, Kuzu, or Amazon Neptune. Optional Zep cloud service (built on Graphiti) exists but is not required.

Trade-off: Zero licensing cost for both. Operational cost depends on your database choice (Graphiti) or hosting (FastMCP).

Ease of Use

FastMCP prioritizes developer speed:

```python
from fastmcp import FastMCP

# Create a named MCP server instance.
mcp = FastMCP("my-server")


# Register the function as an MCP tool; the schema comes from the type hints.
@mcp.tool
def calculate_fibonacci(n: int) -> int:
    """Compute Fibonacci number"""
    ...


if __name__ == "__main__":
    mcp.run()
```

A single decorator handles schema, validation, docs, and protocol compliance. Clients connect via URL with full transport negotiation. Interactive UIs (charts, forms, dashboards) are generated with one flag.
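The automatic schema generation can be pictured with a standard-library sketch that derives a tool descriptor from a function's signature and docstring. This is an illustration of the idea, not FastMCP's implementation; `describe_tool` is a hypothetical helper, the Fibonacci body is filled in only so the example runs, and only a few primitive types are mapped.

```python
import inspect
from typing import get_type_hints

# Minimal mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {int: "integer", float: "number", str: "string", bool: "boolean"}


def describe_tool(func):
    """Build an MCP-style tool descriptor from type hints and the docstring."""
    hints = get_type_hints(func)
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unmapped types fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def calculate_fibonacci(n: int) -> int:
    """Compute Fibonacci number"""
    a, b = 0, 1
    for _ in range(n):
        a, b = b, a + b
    return a


schema = describe_tool(calculate_fibonacci)
print(schema)
```

Because the descriptor is derived from the signature, changing a parameter's type hint changes the advertised schema without any separate definition to keep in sync.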

Graphiti MCP offers plug-and-play memory once configured:

- Docker deployment with Neo4j/FalkorDB.

- Exposes ready MCP tools: add_episode, search_memory_nodes, manage_groups.

- Multi-tenancy via group_id prevents data leaks.

- Supports 6+ LLM providers and embedders out of the box.
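The `group_id` isolation mentioned above can be sketched as a filter applied on every read. This in-memory example is an assumption-laden illustration, not Graphiti's storage layer: `Episode`, `EpisodeStore`, and the substring search are hypothetical stand-ins for a graph database and hybrid retrieval.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Episode:
    group_id: str  # tenant or namespace that owns this memory
    text: str
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class EpisodeStore:
    """Toy store in which every query is scoped to exactly one group_id."""

    def __init__(self):
        self._episodes = []

    def add_episode(self, group_id: str, text: str) -> None:
        self._episodes.append(Episode(group_id, text))

    def search(self, group_id: str, term: str) -> list:
        term = term.lower()
        return [
            e.text
            for e in self._episodes
            if e.group_id == group_id and term in e.text.lower()
        ]


store = EpisodeStore()
store.add_episode("team-a", "Deploy uses FalkorDB")
store.add_episode("team-b", "Deploy uses Neo4j")
print(store.search("team-a", "deploy"))
```

Making the group identifier a required argument of every read path, rather than an optional filter, is what keeps one tenant's memories out of another tenant's results.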

Trade-off: FastMCP wins for Python developers building custom logic. Graphiti MCP wins for teams wanting instant memory without writing graph extraction code.

Ecosystem and Integrations

FastMCP powers ~70% of MCP servers across languages (1M+ daily downloads). It includes:

- Full client libraries and proxying.

- OAuth/enterprise auth out of the box.

- Prefab UI components for interactive apps inside conversations.

- Seamless integration with Prefect workflows and any MCP client.

Graphiti MCP integrates natively with:

- AI IDEs: Claude Desktop, Cursor (persistent memory across apps).

- Frameworks: LangGraph for agentic memory.

- Databases: Neo4j (default), FalkorDB, Kuzu, Neptune.

- LLMs: OpenAI, Anthropic, Gemini, Groq, Azure, Ollama.

- Hundreds of thousands of weekly active users via MCP.

Trade-off: FastMCP is the “build anything” foundation. Graphiti MCP is the “memory layer” that works immediately with existing MCP clients. Many teams combine them: use FastMCP to expose custom tools while routing memory calls to Graphiti MCP.
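A combined setup usually amounts to registering two servers in the client's configuration. The sketch below builds such a configuration as a Python dictionary using the `mcpServers` layout that several MCP clients read; the server names, script path, and URL are hypothetical, and the exact keys a given client accepts should be checked against that client's documentation.

```python
import json


def build_client_config(tools_script: str, memory_url: str) -> dict:
    """Return a client config that registers two independent MCP servers."""
    return {
        "mcpServers": {
            # Hypothetical FastMCP-based server launched as a local process.
            "custom-tools": {"command": "python", "args": [tools_script]},
            # Hypothetical Graphiti MCP endpoint reached over HTTP.
            "graphiti-memory": {"url": memory_url},
        }
    }


config = build_client_config("my_server.py", "http://localhost:8000/sse")
print(json.dumps(config, indent=2))
```

The two entries know nothing about each other; the client decides per call which server's tools to invoke, which is what lets the custom tools and the memory layer evolve separately.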

Which Should You Choose?

Choose FastMCP if:

- You need to expose custom tools, APIs, databases, or UIs to LLMs.

- Your team is Python-first and values rapid iteration.

- You want full control over server architecture, auth, and deployment.

- Example: Building an internal company data connector or interactive dashboard tool.

Choose Graphiti MCP if:

- Your agents need persistent, evolving memory that understands relationships and timelines.

- You want zero-code memory across Claude, Cursor, and custom agents.

- You prioritize sub-second retrieval with multi-tenancy.

- Example: Long-running coding agents, customer support bots, or research assistants that “remember” project history.

Choose both for maximum flexibility: Deploy Graphiti MCP as your memory backend and use FastMCP to build additional domain-specific tools. The MCP standard ensures they interoperate without glue code.

The decision ultimately hinges on whether your bottleneck is custom integration speed (FastMCP) or reliable agent memory (Graphiti MCP). Both represent mature, battle-tested options in the growing MCP ecosystem as of April 2026.

Continue Reading

More articles connected to the same themes, protocols, and tools.

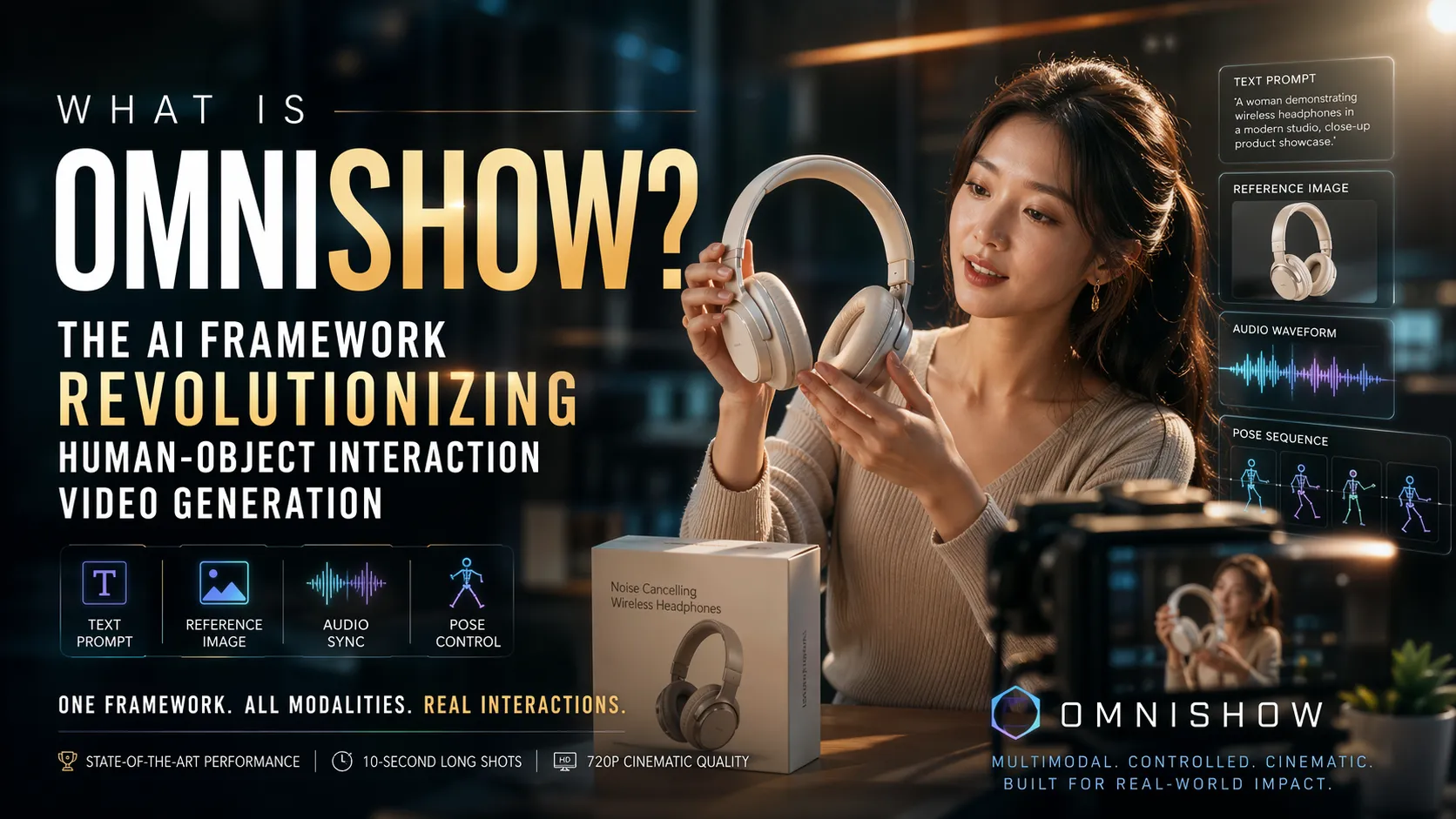

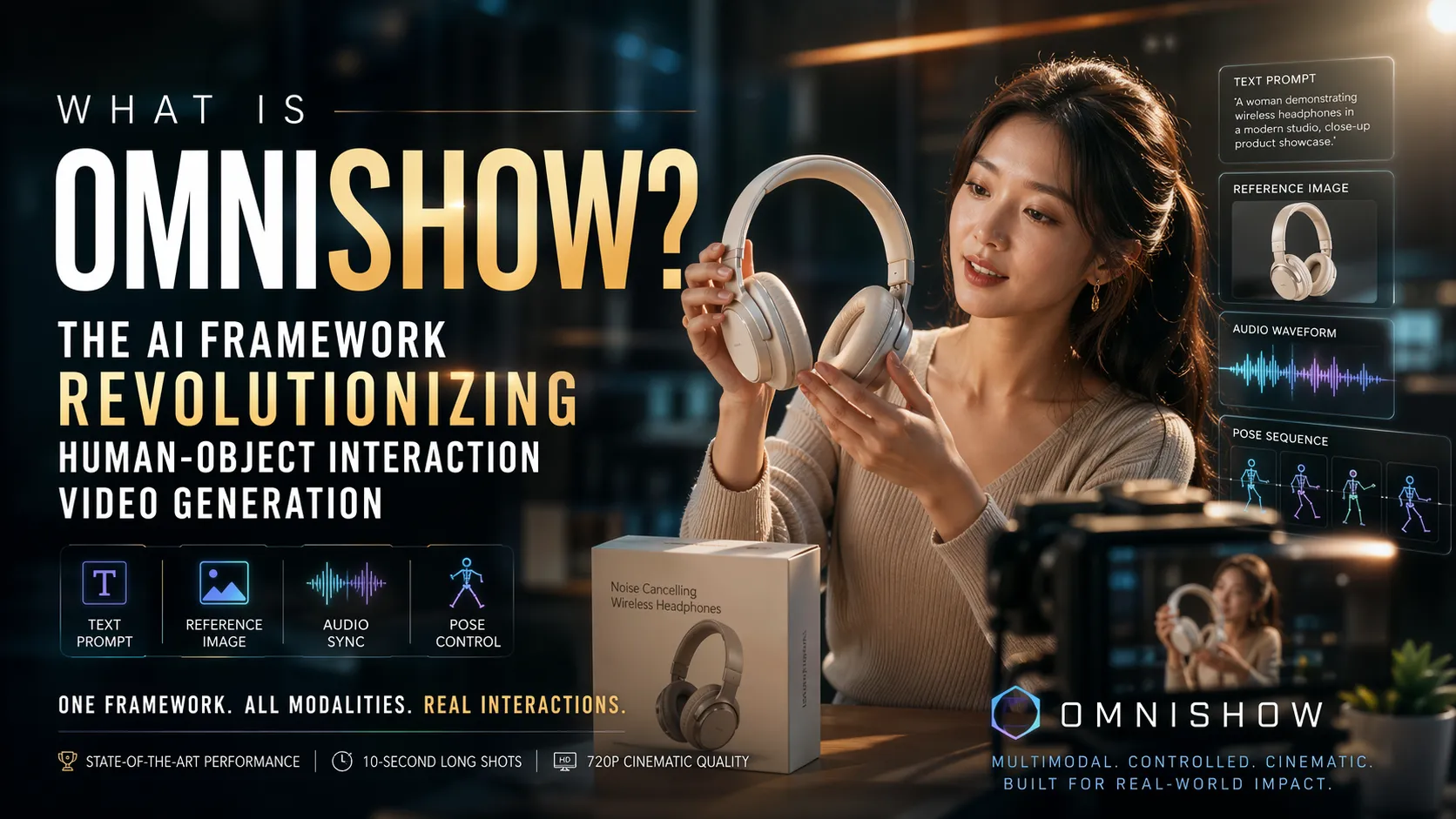

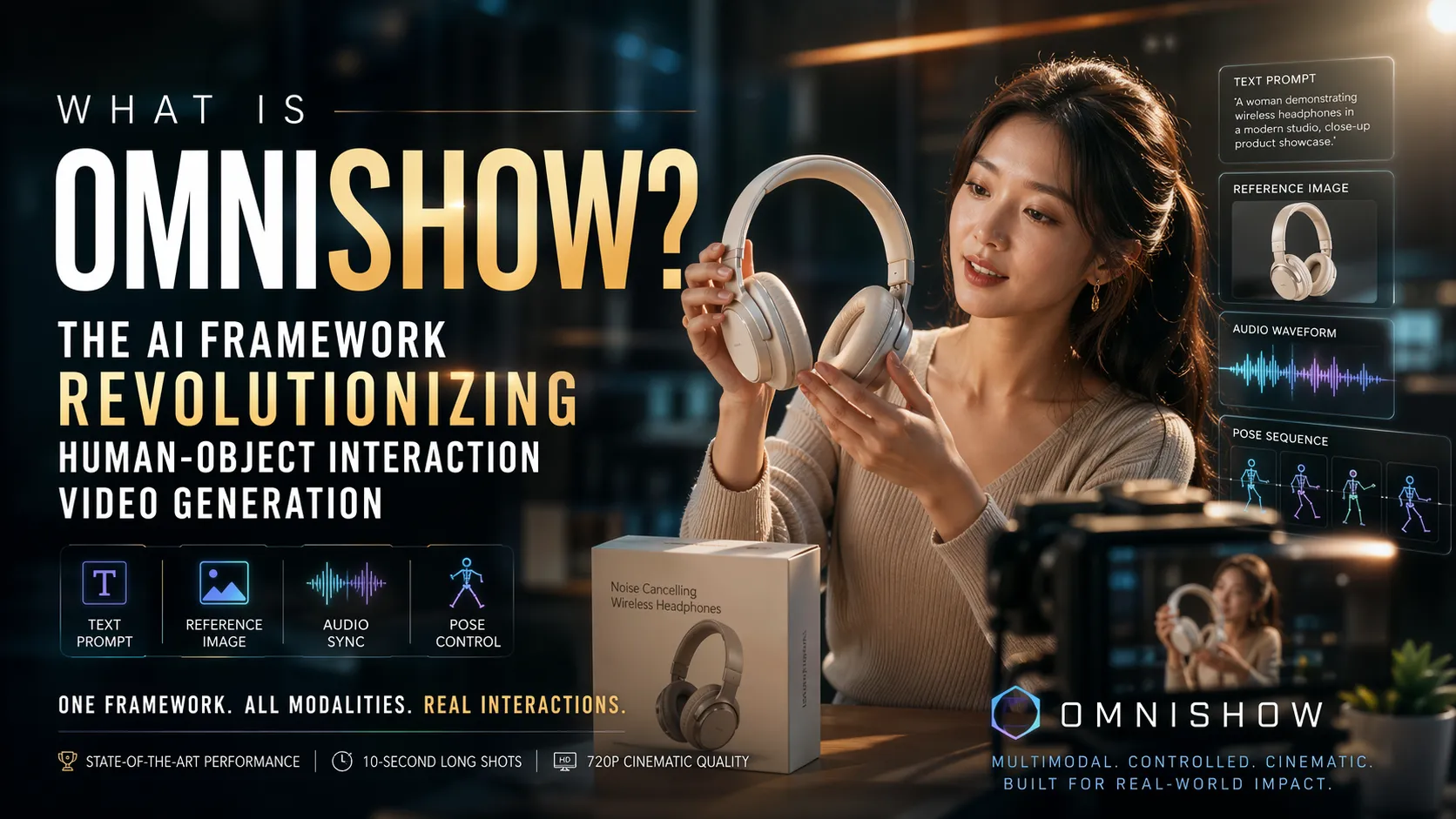

Was ist OmniShow? Das KI-Framework, das die Generierung von Human-Object-Interaction-Videos revolutioniert

O que é OmniShow? O Framework de IA que Revoluciona a Geração de Vídeos de Interação Humano-Objeto

What Is OmniShow? The AI Framework Revolutionizing Human-Object Interaction Video Generation

Referenced Tools

Browse entries that are adjacent to the topics covered in this article.