Seed3D 2.0: ByteDance's Next-Gen 3D Model Just Dropped – Full Breakdown & Benchmarks

Key Takeaways

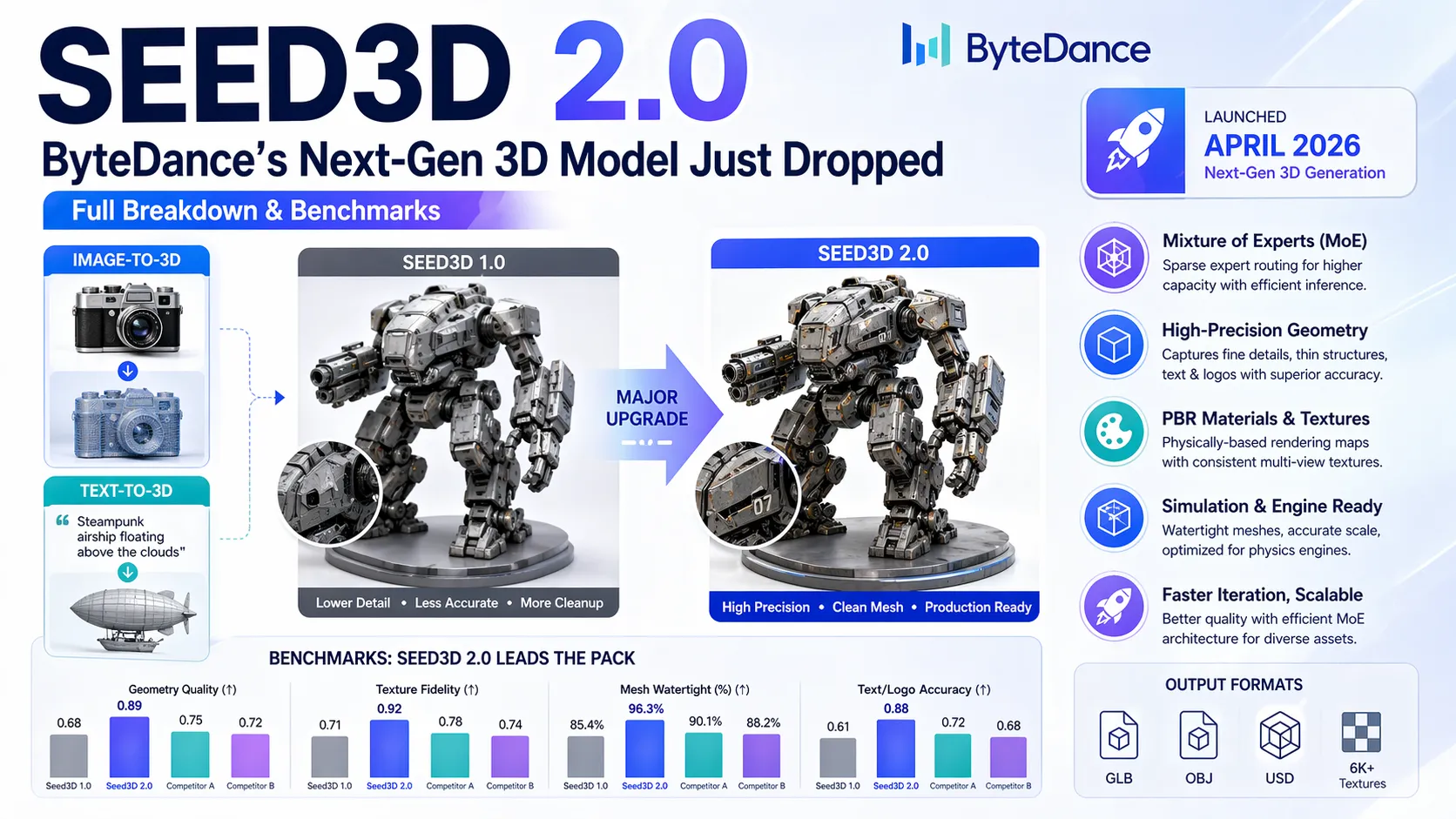

- Seed3D 2.0 is ByteDance's latest high-precision 3D foundation model, released April 2026, featuring a Mixture of Experts (MoE) architecture for superior geometric accuracy and material generation.

- It supports both image-to-3D and text-to-3D workflows, producing simulation-ready assets with watertight meshes, consistent multi-view textures, and physically-based rendering (PBR) materials.

- Analysis shows significant gains over Seed3D 1.0 in fine detail reconstruction (thin structures, text/logos), texture fidelity, and physics compatibility, positioning it among top contenders for embodied AI, XR, and game development.

- Community feedback highlights faster iteration for complex objects while maintaining scalability through sparse expert routing.

- Key output formats include GLB, OBJ, and USD with textures at 6K or higher resolution, minimizing post-processing needs.

What Is Seed3D 2.0?

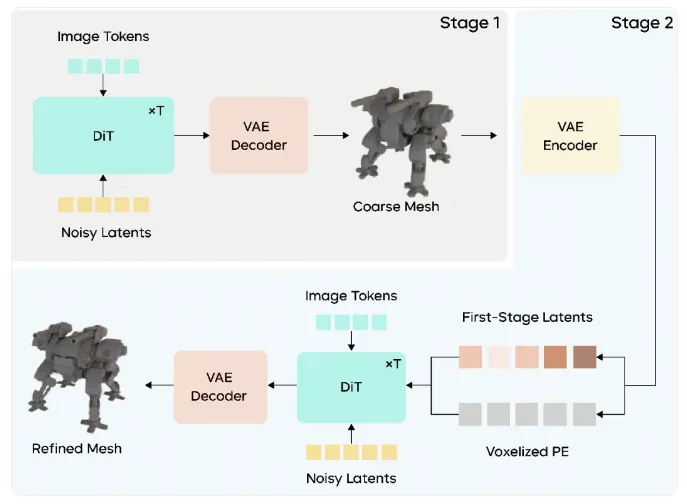

Seed3D 2.0 represents ByteDance's upgraded 3D generative foundation model, building directly on the October 2025 Seed3D 1.0 release. The core innovation lies in its MoE architecture, which scales model capacity via sparse expert routing without proportionally increasing inference costs. This allows the model to handle diverse object categories with higher precision in geometry and material decomposition.
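To make "sparse expert routing" concrete, here is a minimal sketch of generic top-k gating in plain Python. It is not Seed3D 2.0's actual implementation, whose internals are not described here. The names `route_top_k` and `moe_forward`, the toy experts, and the router scores are all hypothetical and chosen only for illustration. The point is that only `k` experts run per input, so adding experts grows capacity without growing per-input compute at the same rate.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k):
    """Return [(expert_index, weight)] for the k highest-scoring experts."""
    order = sorted(range(len(gate_logits)),
                   key=lambda i: gate_logits[i], reverse=True)
    top = order[:k]
    # Renormalize the gate scores over the selected experts only.
    weights = softmax([gate_logits[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Weighted sum of the selected experts' outputs; the others never run."""
    out = [0.0] * len(x)
    for idx, w in route_top_k(gate_logits, k):
        y = experts[idx](x)
        out = [o + w * yi for o, yi in zip(out, y)]
    return out

# Four toy "experts" (assumption: each just scales its input).
experts = [lambda v, s=s: [s * vi for vi in v] for s in (1.0, 2.0, 3.0, 4.0)]
gate_logits = [0.1, 2.0, -1.0, 2.0]  # hypothetical router scores for one input
selected = route_top_k(gate_logits, k=2)
result = moe_forward([1.0, -1.0], experts, gate_logits, k=2)
```

In this toy run, experts 1 and 3 tie for the highest score and share the weight equally, while experts 0 and 2 are skipped entirely.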

Unlike Seed3D 1.0, which focused primarily on single-image reconstruction, Seed3D 2.0 strengthens semantic understanding of text prompts while refining the image-to-3D pipeline. It generates assets optimized for physics engines, with watertight meshes, accurate scale estimation, and PBR maps (albedo, metallic, roughness, normal), so they are immediately usable in simulators, Unity, Unreal, or Omniverse.

Key Technical Improvements in Seed3D 2.0

Benchmarks indicate Seed3D 2.0 achieves state-of-the-art (SOTA) results in several dimensions:

- Geometry Precision: Better reconstruction of thin protrusions, holes, intricate surfaces, and fine text/logos with reduced distortion.

- Texture & Material Quality: Higher-fidelity multi-view consistent textures and more accurate PBR decomposition, reducing common issues like blurry or inconsistent shading.

- Scalability: Token-based quality control (from quick drafts to ultra-detailed outputs) via MoE routing.

- Input Flexibility: Stronger support for single or multi-image inputs, plus improved text-to-3D capabilities for prompt-driven generation.

The pipeline integrates components similar to those in Seed3D 1.0 (geometry DiT, multi-view synthesis, PBR estimation, UV completion), but with upgraded expert modules that handle complex semantics and physical properties better.

Seed3D 2.0 vs Seed3D 1.0 and Competitors

| Aspect | Seed3D 1.0 | Seed3D 2.0 | Tripo3D / Hunyuan3D (approx.) |
|---|---|---|---|
| Architecture | Hybrid DiT + VAE | MoE with sparse routing | Varies (often diffusion-based) |
| Geometry Fidelity | High (simulation-ready) | SOTA improvements in details | Competitive but more cleanup |
| Texture Resolution | Up to 6K PBR | Enhanced consistency & realism | 4K–8K depending on tier |
| Text-to-3D Support | Limited | Significantly improved | Strong in some alternatives |
| Inference Speed | Minutes per asset | Optimized via MoE | Varies |
| Best For | Image-to-3D simulation | High-precision production & text | General creative use |

Seed3D 2.0 narrows gaps with closed-source leaders while maintaining ByteDance's emphasis on physics rigor. It excels in scenarios requiring minimal manual retopology or material tweaking.

Use Cases and Applications

- Embodied AI & Robotics: Simulation-grade assets for training in physics engines with accurate collisions and material properties.

- Game Development: Rapid prototyping of props, characters, and environments with production-ready PBR workflows.

- XR / AR / VR: High-fidelity models that maintain visual quality across viewpoints and lighting conditions.

- Product Visualization: E-commerce or industrial design where accurate textures and geometry matter for realism.

- Multi-Object Scenes: Scalable composition guided by vision-language models for complex layouts.

Advanced Tips and Best Practices

- Input Optimization: Use high-resolution, well-lit single images with clear angles for best geometry. For multi-view, provide 3–4 complementary shots to reduce ambiguities.

- Prompt Engineering for Text-to-3D: Combine descriptive material terms ("matte plastic", "brushed metal") with style references to leverage improved semantic understanding.

- Quality Scaling: Start with lower token counts for rapid iteration, then upscale specific regions for final assets.

- Post-Processing Edge Cases: While minimal cleanup is needed, complex transparent or highly reflective materials may still benefit from manual PBR adjustments in tools like Substance Painter.

- Integration: Export in USD for Omniverse or GLB for web/XR pipelines. Test immediately in target physics engines to verify collision meshes.
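Before loading an exported asset into a physics engine, a quick sanity check on the mesh can catch obvious holes. The sketch below assumes the mesh has already been parsed into a list of triangles given as vertex-index triples. How it is parsed depends on the export format and tooling, which is not covered here. The function name `is_watertight` is illustrative, and the test is only a necessary condition: it confirms every edge is shared by exactly two triangles, but does not detect self-intersections or flipped normals.

```python
from collections import Counter

def is_watertight(faces):
    """True if every undirected edge is shared by exactly two triangles."""
    edge_counts = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            # Store each edge with sorted endpoints so direction is ignored.
            edge_counts[(min(u, v), max(u, v))] += 1
    return bool(edge_counts) and all(n == 2 for n in edge_counts.values())

# A closed tetrahedron, and the same shape with one face removed.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
open_tetra = tetra[:-1]

closed_ok = is_watertight(tetra)       # closed surface
open_ok = is_watertight(open_tetra)    # has boundary edges
```

A mesh that fails this check has boundary or non-manifold edges and will likely need repair before it can serve as a collision mesh.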

Common Pitfalls to Avoid:

- Overly complex or occluded input images leading to hallucinated geometry.

- Ignoring scale estimation: always verify real-world dimensions when accuracy is critical.

- Expecting perfect topology on extremely organic or furry surfaces without refinement.
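The scale pitfall above can be checked with a few lines of arithmetic. This sketch assumes vertices are available as `(x, y, z)` tuples, that the Y axis points up, and that one real-world dimension of the object (its height here) is known. The helper names and the sample values are hypothetical. It measures the axis-aligned bounding box and computes the uniform factor needed to match the known height.

```python
def bounding_box_size(vertices):
    """Extent of the axis-aligned bounding box along x, y, and z."""
    xs, ys, zs = zip(*vertices)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))

def scale_to_height(vertices, target_height, up_axis=1):
    """Uniformly rescale so the extent along up_axis equals target_height."""
    size = bounding_box_size(vertices)
    if size[up_axis] == 0:
        raise ValueError("mesh has zero extent along the up axis")
    factor = target_height / size[up_axis]
    scaled = [tuple(c * factor for c in v) for v in vertices]
    return factor, scaled

# Corners of a 2 x 4 x 1 box in arbitrary model units (illustrative data).
box = [(x, y, z) for x in (0.0, 2.0) for y in (0.0, 4.0) for z in (0.0, 1.0)]

# Assume the real object is known to be 1.0 m tall.
factor, scaled_box = scale_to_height(box, target_height=1.0)
new_size = bounding_box_size(scaled_box)
```

If the computed factor is far from 1, the estimated scale differs from the reference measurement and the asset should be rescaled before simulation.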

Conclusion

Seed3D 2.0 pushes the boundaries of accessible, high-precision 3D generation with its MoE-driven advancements in geometry, textures, and simulation compatibility. For developers and creators needing reliable assets without traditional modeling bottlenecks, it offers a compelling upgrade path from Seed3D 1.0 and competitive alternatives.

Head to the official Seed3D platform or integrated services like seed3dai.com to experiment with the latest model. Whether building the next simulation environment or accelerating game asset pipelines, Seed3D 2.0 delivers the precision and scalability demanded in 2026 AI-driven 3D workflows.
